Best AI SEO Tools 2026: 6 Tested for Brand Visibility

We compared six of the best AI SEO tools on real brand-tracking workflows across ChatGPT, Perplexity, Gemini, and Google AI Overview. Here's what actually works in 2026.

Search is splitting in two. Half the queries that used to land on a Google SERP now resolve inside an AI answer, with no click, just a citation. AI SEO is the discipline of getting cited in those answers. The mechanics differ from traditional SEO, the measurement layer differs, and each surface (ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, Grok) rewards different signals.

```bash
curl -X POST https://api.cloro.dev/v1/monitor/chatgpt \
  -H "Authorization: Bearer sk_live_your_api_key_here" \
  -H "Content-Type: application/json" \
  -d '{
    "prompt": "best project management software for remote teams",
    "country": "US",
    "include": {
      "markdown": true,
      "sources": true
    }
  }'
```

```json
{
  "success": true,
  "result": {
    "text": "...",
    "sources": [],
    "markdown": "..."
  }
}
```

ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, Grok. Each surface ranks citations differently, so AI SEO means winning across the cluster rather than optimizing for one. cloro's API returns the same response shape on every surface, which lets you compare them like-for-like.

Four shifts every AI SEO program has to internalize. They aren't tactics; they change what "winning" means. Tools (cloro included) only matter once you've named the right game.

Traditional SEO measures rank → click-through → conversion. AI SEO measures whether your domain ends up cited inside an AI answer, since most users never click through. "Position 1" loses meaning when there's no SERP. The metrics that matter are citation rate (what fraction of queries cite you) and share of voice against competitors on the same prompt set. Both come from sampling real responses.
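As a rough illustration of those two metrics, here is a minimal sketch that computes citation rate and share of voice from a batch of sampled responses. It assumes each response follows the `result.sources[].url` shape shown in the sample output on this page; the helper names (`domain_of`, `cited_domains`, `citation_rate`, `share_of_voice`) are hypothetical, and share of voice is defined here as a domain's share of citations among the tracked domains only, which is one reasonable definition rather than a standard.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the host of a URL, lowercased, without a leading 'www.'."""
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def cited_domains(response: dict) -> set[str]:
    """Collect the distinct domains cited in one sampled response."""
    sources = response.get("result", {}).get("sources", [])
    return {domain_of(s["url"]) for s in sources if s.get("url")}


def citation_rate(responses: list[dict], domain: str) -> float:
    """Fraction of sampled responses that cite `domain` at least once."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if domain in cited_domains(r))
    return hits / len(responses)


def share_of_voice(responses: list[dict], domains: list[str]) -> dict[str, float]:
    """Each tracked domain's share of the citations won by the tracked set."""
    counts = {d: 0 for d in domains}
    for r in responses:
        for d in cited_domains(r) & set(counts):
            counts[d] += 1
    total = sum(counts.values())
    return {d: (c / total if total else 0.0) for d, c in counts.items()}
```

Note that this sketch treats subdomains other than `www.` as separate domains, so `blog.example.com` and `example.com` would be counted independently unless you normalize them first.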

Google ranks pages on a corpus index. AI engines retrieve passages, then synthesize an answer. The unit being scored is no longer the page; it's the citable passage. Pages with strong retrieval signal (clean factual claims, schema markup, primary-source data, citable structure) get pulled in even when their domain authority is modest. Pages optimized only for traditional ranking don't.

ChatGPT (Bing + OpenAI's index), Perplexity (Sonar + crawl), Gemini (Google's grounding), AI Overview (Google's classic index + Gemini), AI Mode (live Google), Copilot (Bing), Grok (live X + web). Each retrieves and ranks independently, and citation overlap on the same prompt is consistently under 30%. AI SEO is seven parallel optimization problems, not one.
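One way to check the overlap figure against your own prompt set is to compare the cited domains per engine pairwise. The sketch below assumes you have already reduced each engine's response to a set of cited domains, and it uses Jaccard similarity as the overlap measure. That choice is an assumption; the text above does not say how overlap is defined. The function names are hypothetical.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard overlap: shared items divided by all distinct items."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def pairwise_overlap(cited: dict[str, set[str]]) -> dict[tuple[str, str], float]:
    """Overlap of cited domains for every pair of engines on one prompt."""
    return {
        (e1, e2): jaccard(cited[e1], cited[e2])
        for e1, e2 in combinations(sorted(cited), 2)
    }
```

Averaging these pairwise scores across a prompt set gives a single number you can compare with the under-30% claim.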

Search Console, Ahrefs, Semrush, GA4: none of them track AI citations. The signal AI SEO operates on (which prompts cite you, which passages got pulled, how that shifts week-over-week) lives nowhere in the existing SEO stack. Either you build a sampling pipeline against the real engine APIs, or you fly blind. cloro is the measurement layer that makes the rest of AI SEO operable.
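To make the week-over-week signal concrete, here is a small sketch that buckets dated samples by ISO week and reports the change in citation rate. The input format (a list of `(date, cited)` pairs, one per sampled response) and the function names are assumptions for illustration, not part of any tool described here.

```python
from collections import defaultdict
from datetime import date


def weekly_citation_rate(samples: list[tuple[date, bool]]) -> dict[tuple[int, int], float]:
    """Group samples by ISO (year, week) and return the cited fraction per week."""
    buckets: dict[tuple[int, int], list[bool]] = defaultdict(list)
    for day, cited in samples:
        iso = day.isocalendar()
        buckets[(iso[0], iso[1])].append(cited)
    return {week: sum(flags) / len(flags) for week, flags in sorted(buckets.items())}


def week_over_week(rates: dict[tuple[int, int], float]) -> dict[tuple[int, int], float]:
    """Change in citation rate versus the previous week present in the data."""
    weeks = sorted(rates)
    return {cur: rates[cur] - rates[prev] for prev, cur in zip(weeks, weeks[1:])}
```

Weeks with no samples are simply skipped, so a delta always compares against the most recent week that has data.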

Same auth and same response shape on every engine: fan out one prompt across the engines that matter, classify the cited sources by domain, and compute citation rate.

```python
import requests

# Same auth, same payload shape; only the engine in the URL changes.
ENGINES = ["chatgpt", "perplexity", "gemini", "aimode", "copilot"]

for engine in ENGINES:
    response = requests.post(
        f"https://api.cloro.dev/v1/monitor/{engine}",
        headers={
            "Authorization": "Bearer sk_live_your_api_key_here",
            "Content-Type": "application/json",
        },
        json={
            "prompt": "best project management software for remote teams",
            "country": "US",
            "include": {"markdown": True, "sources": True},
        },
    )
    # Print the list of cited sources returned for this engine.
    print(engine, response.json()["result"]["sources"])
```

```json
{
  "success": true,
  "result": {
    "text": "For remote teams, the most-recommended project management tools are Asana, Linear, ClickUp...",
    "markdown": "For remote teams, the most-recommended project management tools are...",
    "sources": [
      {
        "position": 1,
        "url": "https://asana.com/use-cases/remote-work",
        "label": "Remote work — Asana",
        "description": "How distributed teams stay aligned with Asana's project boards and timeline view..."
      }
    ]
  }
}
```

Pick a plan that fits your volume. Price per credit drops as you scale.

Credit cost per request varies by provider. The rates below apply to async/batch requests; sync requests add a +2 credit surcharge.

Google News uses the same pricing as Google Search.

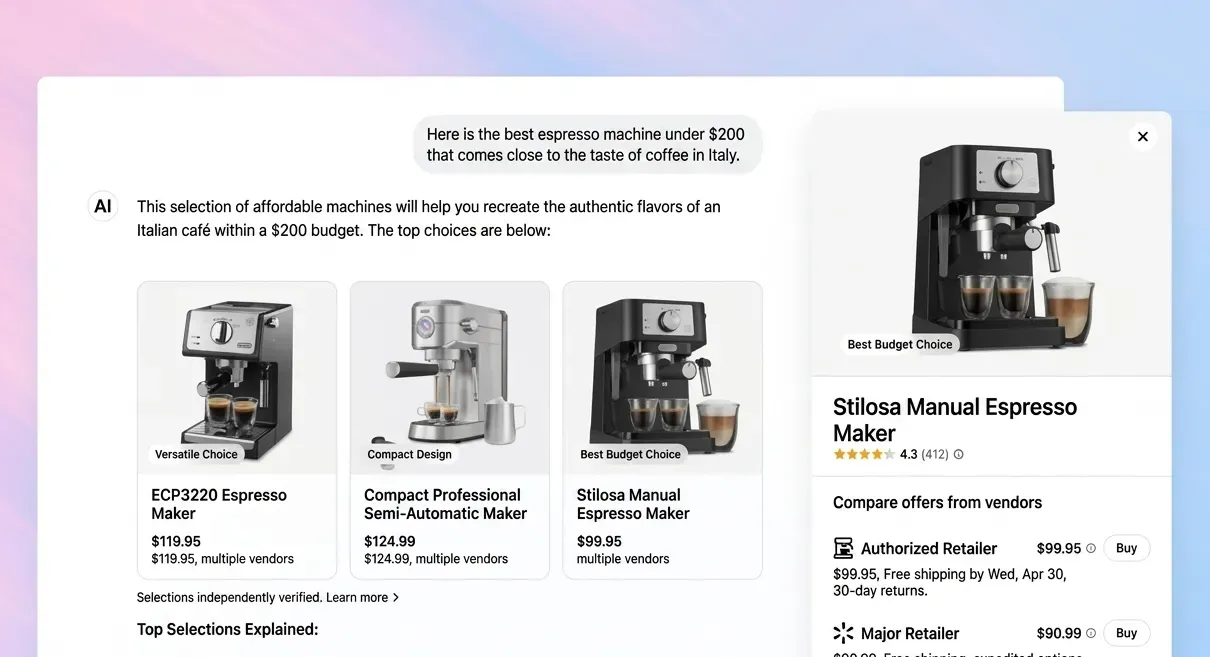

AI SEO is the practice of getting your domain cited inside AI-generated answers (ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, Grok), as distinct from ranking on a Google SERP. The mechanics overlap (E-E-A-T, structured data, authoritative sourcing), but the measurement layer is different (citation rate, not rank), the unit being scored is different (passage, not page), and the surface is fragmented across seven engines that retrieve and rank independently.

GEO is a sub-discipline of AI SEO, specifically the practice of optimizing content for citation in AI answers. AI SEO is the broader category that also includes measurement (mention rate, share of voice), competitive analysis, prompt-set strategy, and the cross-engine view. Think of GEO as on-page work and AI SEO as the program. cloro hosts a deeper page on the GEO discipline at Generative Engine Optimization.

Not yet, and probably not for a while. Traditional SEO still drives the majority of branded and transactional traffic. AI SEO is where the *opportunity* is greatest in 2026 because the discipline is young, the SERP is unsettled, and citation signals are still being calibrated by the engines themselves. Most successful programs run both: same content team, same authority signals, with a parallel measurement loop for AI citations.

Five things compound. (1) Citable structure: clear claims, defined terms, primary-source data the LLM can lift verbatim. (2) Authority anchors: being referenced by sources the engines already trust (Wikipedia, .gov, .edu, top-tier publications). (3) Schema markup: Article, FAQPage, and Product schema give retrieval extra signal. (4) Freshness: a recent update date (for example, `dateModified` in Article schema) beats stale content for time-sensitive queries. (5) Topical coverage: comprehensive cluster pages outrank thin one-off posts.
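For the schema and freshness points, a minimal sketch of generating Article markup might look like the following. The `@context`, `@type`, `headline`, `datePublished`, `dateModified`, `mainEntityOfPage`, and `author` keys are standard schema.org vocabulary; the function name and every value passed to it are hypothetical placeholders.

```python
import json


def article_jsonld(headline: str, url: str, published: str, modified: str, author: str) -> str:
    """Build a schema.org Article JSON-LD string for embedding in a page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published,  # ISO 8601 date, e.g. "2026-01-05"
        "dateModified": modified,    # update this whenever the content changes
        "author": {"@type": "Organization", "name": author},
    }
    return json.dumps(data, indent=2)
```

The returned string is meant to be placed inside a `<script type="application/ld+json">` tag in the page head. Whether and how much any given engine weighs this markup is not something this sketch can confirm.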

Three pieces. (1) A target prompt list of 50–200 long-tail informational queries where your buyers are in research mode. (2) A measurement loop that samples those prompts daily across the engines that matter, parses `sources[]`, classifies by domain, and tracks citation rate over time (this is what AI Visibility Tracking productizes). (3) A content tuning loop: where you're not cited, identify the cited domains, study their structure, fix yours. Iterate weekly.
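The third piece, finding who is cited where you are not, can be sketched as a simple gap report. This assumes you have already collected, for each prompt, the list of source URLs from `sources[]`; the `gap_report` name and the input shape are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def gap_report(samples: dict[str, list[str]], own_domain: str, top: int = 5) -> list[tuple[str, int]]:
    """Rank domains cited on prompts where `own_domain` is absent.

    `samples` maps each prompt to the source URLs cited in its answer.
    """
    counts: Counter[str] = Counter()
    for urls in samples.values():
        hosts = {(urlparse(u).hostname or "").lower().removeprefix("www.") for u in urls}
        hosts.discard("")  # drop URLs that had no parseable host
        if own_domain in hosts:
            continue  # already cited on this prompt, nothing to fix
        counts.update(hosts)
    return counts.most_common(top)
```

The domains at the top of the report are the ones whose page structure is worth studying first, since they win the most prompts you currently lose.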

Right, and that's the structural gap the measurement layer has to fill. Every AI engine returns its raw response (text, citations, sources, engine-specific objects) on its public API surface. AI SEO measurement is a sampling pipeline that hits those APIs, parses the responses, and aggregates citation data into the metrics your team already understands (mention rate, share of voice, competitive position). Either you build that pipeline or you use one. cloro is the API layer; full-stack hosted dashboards exist for teams that don't want to build.

Volume-weighted: ChatGPT (~800M weekly users) and AI Overview (renders on most informational Google SERPs) are the must-cover surfaces. Start there. Perplexity (~20M weekly users, heavily research-mode) is high-value for B2B and technical queries. Gemini and Copilot are growing but lower-volume; AI Mode is Google's bet for the next phase. Grok matters for real-time / news-adjacent queries. Cover ChatGPT + AI Overview minimum, then expand from there.

AI search tracking in 2026 means monitoring your brand across 7+ engines, not just ChatGPT. Here's the practitioner's playbook — what to measure, how often, and which tools work.

Should you build AI search visibility tracking in-house or buy a platform? Honest cost breakdown — engineering hours, data infrastructure, and the break-even point.

AI SEO without measurement is guesswork. Start with AI Visibility Tracking for the productized measurement layer, or Generative Engine Optimization to go deeper on the GEO discipline.