LLM Visibility Tools: 12 Tested for AI Search

We tested 12 LLM visibility tracking tools on real brand-monitoring workflows across ChatGPT, Perplexity, Gemini, and Google AI Overview. What works, what doesn't.

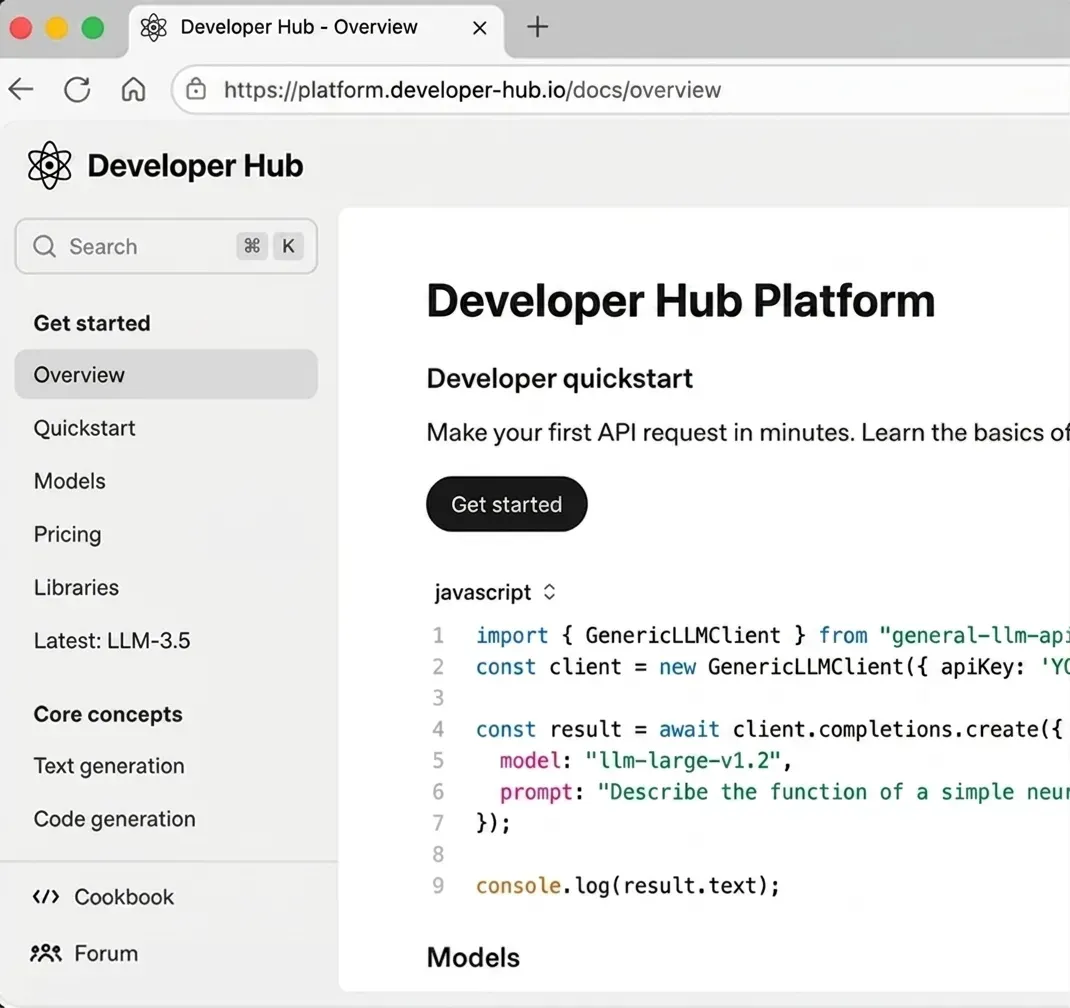

The measurement API for AI search visibility. One call returns parsed citations from 7 engines in one response shape. Aggregate to compute mention rate, share of voice, and competitive position. The data is yours, and so is the dashboard.

4.7 on G2. No credit card required.

```bash
curl -X POST https://api.cloro.dev/v1/monitor/chatgpt \
  -H "Authorization: Bearer sk_live_your_api_key_here" \
  -H "Content-Type: application/json" \
  -d '{
    "prompt": "best AI brand visibility tracking tools",
    "country": "US",
    "include": {
      "markdown": true,
      "sources": true
    }
  }'
```

```json
{
  "success": true,
  "result": {
    "text": "...",
    "sources": [],
    "markdown": "...",
    "searchQueries": []
  }
}
```

ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, Grok. Same auth, shared credit pool, same response shape. Adding a new engine to your monitoring is a URL change, not a pipeline rewrite.

AI visibility tracking comes in two product shapes: hosted dashboards (turnkey UI on top of someone else's API) and the API itself (raw structured response, you compose the dashboard). Brand teams that need their data in their warehouse pick the API. Here's why.

Hosted AI visibility products give you a UI. The raw `sources[]`, position data, and engine-specific objects stay locked behind it: no BigQuery export, no custom share-of-voice math, no integration with your existing rank-tracker. cloro returns the structured response. The dashboard layer is yours.

Engines don't expose mention-rate dashboards. The metric only exists if you sample real responses on cadence and aggregate. A 100-prompt × 7-engine × daily loop ships 21k calls/month, which fits inside the $100 Hobby plan.
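As a rough sketch of the "sample and aggregate" step, the snippet below computes a per-engine mention rate from stored runs. The `runs` structure, the helper names, and the `yourbrand.com` domain are assumptions made for illustration; only the `sources[]` entries with a `url` field come from the response shape shown on this page.

```python
from collections import defaultdict
from urllib.parse import urlparse


def cites_domain(sources, domain):
    """True if any cited URL is on `domain` or one of its subdomains."""
    for source in sources:
        host = (urlparse(source["url"]).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return True
    return False


def mention_rate_by_engine(runs, domain):
    """Fraction of sampled runs per engine whose sources cite `domain`."""
    hits, totals = defaultdict(int), defaultdict(int)
    for run in runs:
        totals[run["engine"]] += 1
        hits[run["engine"]] += cites_domain(run["sources"], domain)
    return {engine: hits[engine] / totals[engine] for engine in totals}


# Hypothetical stored samples; each "sources" list mirrors result["sources"].
runs = [
    {"engine": "chatgpt", "sources": [{"position": 1, "url": "https://yourbrand.com/guide"}]},
    {"engine": "chatgpt", "sources": [{"position": 1, "url": "https://example.org/post"}]},
    {"engine": "perplexity", "sources": []},
]

rates = mention_rate_by_engine(runs, "yourbrand.com")
print(rates)
```

Matching on the parsed hostname rather than a substring of the URL avoids counting pages that merely mention the brand name in a path or query string.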

Citation overlap between engines on the same prompt is consistently under 30%. A brand cited #1 on ChatGPT can be invisible on Perplexity. Tracking one engine produces a single-channel report, not visibility data. cloro covers all 7 under one key, with the same response shape.
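One way to check overlap on your own prompts is a Jaccard score over the cited domains of each engine pair. The page does not say how its overlap figure is measured, so treat Jaccard as an assumption here; the helper names and sample URLs are hypothetical too.

```python
from itertools import combinations
from urllib.parse import urlparse


def cited_domains(sources):
    """Set of cited hostnames, with a leading 'www.' stripped."""
    domains = set()
    for source in sources:
        host = (urlparse(source["url"]).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.add(host)
    return domains


def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def pairwise_overlap(sources_by_engine):
    """Jaccard overlap of cited domains for every pair of engines."""
    domains = {engine: cited_domains(s) for engine, s in sources_by_engine.items()}
    return {
        (a, b): jaccard(domains[a], domains[b])
        for a, b in combinations(sorted(domains), 2)
    }


# Hypothetical sources for one prompt on two engines.
sources_by_engine = {
    "chatgpt": [{"url": "https://a.com/1"}, {"url": "https://www.b.com/2"}],
    "perplexity": [{"url": "https://b.com/3"}, {"url": "https://c.com/4"}, {"url": "https://d.com/5"}],
}

overlap = pairwise_overlap(sources_by_engine)
print(overlap)
```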

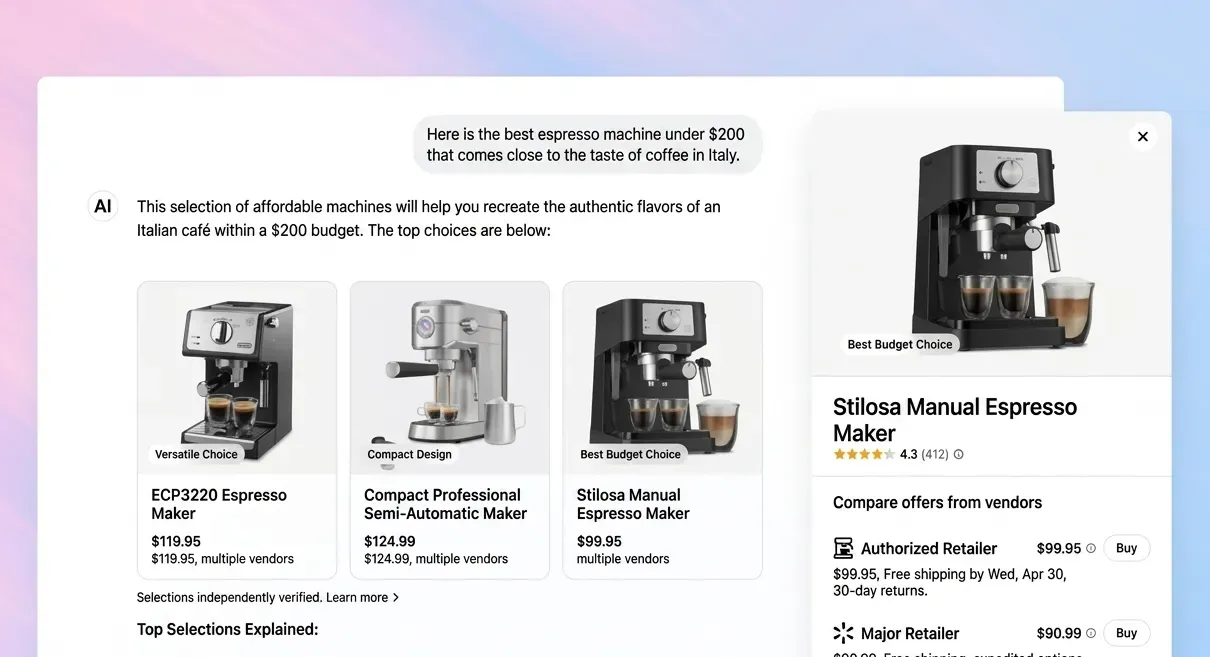

Share-of-voice math runs on more than the answer paragraph: domain-classified citations, cited-position weighting, entity-recognized brands, engine-specific objects (places, shopping cards, ads). cloro returns the full structured envelope per response, with every signal the computation needs.
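A minimal sketch of cited-position weighting, assuming a simple `1 / position` weight and a hand-maintained brand-to-domain map. Both are illustrative choices, not a methodology the API prescribes, and the brand names are placeholders.

```python
from collections import defaultdict
from urllib.parse import urlparse


def share_of_voice(responses, brand_domains):
    """Position-weighted share of voice per brand across a set of responses.

    Each citation contributes 1 / position, so a #1 citation counts twice
    as much as a #2 citation. Domains not in `brand_domains` are ignored.
    """
    scores = defaultdict(float)
    for sources in responses:
        for source in sources:
            host = (urlparse(source["url"]).hostname or "").lower()
            for brand, domain in brand_domains.items():
                if host == domain or host.endswith("." + domain):
                    scores[brand] += 1.0 / source["position"]
    total = sum(scores.values())
    return {brand: (scores[brand] / total if total else 0.0) for brand in brand_domains}


# Hypothetical brand map and two sampled responses (their sources[] lists).
brand_domains = {"yourbrand": "yourbrand.com", "competitor": "competitor.com"}
responses = [
    [
        {"position": 1, "url": "https://yourbrand.com/guide"},
        {"position": 2, "url": "https://competitor.com/blog"},
    ],
    [
        {"position": 1, "url": "https://competitor.com/pricing"},
        {"position": 2, "url": "https://en.wikipedia.org/wiki/Generative_engine_optimization"},
    ],
]

sov = share_of_voice(responses, brand_domains)
print(sov)
```

Swapping the weight function (for example, a flat weight of 1 per citation) is a one-line change, which is the point of computing the metric from raw responses.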

One prompt, seven engines, one credit pool. Switch the URL, fan out across the AI search stack, classify by domain, aggregate.

```python
import requests

# One prompt. Every AI engine. Shared credit pool.
prompt = "best AI brand visibility tracking tools"
engines = ["chatgpt", "perplexity", "gemini", "aimode", "aioverview", "copilot", "grok"]

results = {}
for engine in engines:
    response = requests.post(
        f"https://api.cloro.dev/v1/monitor/{engine}",
        headers={
            "Authorization": "Bearer sk_live_your_api_key_here",
            "Content-Type": "application/json",
        },
        # Request sources explicitly (as in the curl example), since the loop reads them.
        json={"prompt": prompt, "country": "US", "include": {"markdown": True, "sources": True}},
        timeout=60,
    )
    response.raise_for_status()  # surface HTTP errors instead of parsing an error body
    # Treat a missing sources field as "no citations".
    sources = response.json()["result"].get("sources", [])
    mentioned = any("yourbrand" in s["url"] for s in sources)
    results[engine] = {"mentioned": mentioned, "citations": len(sources)}

print(results)
```

```json
{
  "success": true,
  "result": {
    "text": "Several tools track brand visibility across AI engines. cloro provides a unified API across ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, and Grok...",
    "sources": [
      {
        "position": 1,
        "url": "https://cloro.dev/ai-visibility-tracking/",
        "label": "AI Visibility Tracking — cloro",
        "description": "Monitor brand mentions across ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, and Grok via one API."
      },
      {
        "position": 2,
        "url": "https://en.wikipedia.org/wiki/Generative_engine_optimization",
        "label": "Generative engine optimization — Wikipedia",
        "description": "Background on the discipline of optimizing content for AI-generated answers."
      }
    ],
    "markdown": "Several tools track brand visibility across AI engines. cloro provides a unified API across ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, and Grok..."
  }
}
```

Pick a plan that fits your volume. Price per credit drops as you scale.

Credit cost per request varies by provider. The rates below apply to async/batch requests; sync requests add a +2 credit surcharge.

Google News uses the same pricing as Google Search.

Five primary metrics, all aggregated from raw `sources[]` per response: mention rate (fraction of runs that cite your domain), citation position (where you appear in the cited list), share of voice (your citation rate vs competitors on the same prompt set), cross-engine coverage (how many of the 7 engines cite you), and entity recognition (whether engines correctly attribute claims to your brand vs misattribute or omit). All five are computed by your code from the response, so you can match them to whatever methodology your team already reports.
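To make two of these concrete, here is a hedged sketch of citation position and cross-engine coverage for a single prompt. The function names, the `yourbrand.com` domain, and the choice to report the best (lowest) position per engine are assumptions; the engine slugs are taken from the fan-out example on this page.

```python
from statistics import mean
from urllib.parse import urlparse

ENGINES = ["chatgpt", "perplexity", "gemini", "aimode", "aioverview", "copilot", "grok"]


def on_domain(url, domain):
    """True if `url` is hosted on `domain` or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def best_position(sources, domain):
    """Lowest cited position for `domain`, or None if it is not cited."""
    positions = [s["position"] for s in sources if on_domain(s["url"], domain)]
    return min(positions) if positions else None


def position_and_coverage(sources_by_engine, domain):
    """Mean best position and the share of the 7 engines that cite `domain`."""
    best = {engine: best_position(s, domain) for engine, s in sources_by_engine.items()}
    cited = {engine: pos for engine, pos in best.items() if pos is not None}
    return {
        "mean_position": mean(list(cited.values())) if cited else None,
        "engines_citing": sorted(cited),
        "coverage": len(cited) / len(ENGINES),
    }


# Hypothetical sources for one prompt; engines not sampled count as not citing.
sources_by_engine = {
    "chatgpt": [{"position": 1, "url": "https://yourbrand.com/guide"}],
    "perplexity": [
        {"position": 1, "url": "https://example.org/post"},
        {"position": 3, "url": "https://docs.yourbrand.com/setup"},
    ],
    "gemini": [{"position": 1, "url": "https://example.org/post"}],
}

summary = position_and_coverage(sources_by_engine, "yourbrand.com")
print(summary)
```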

Three reasons. (1) Data ownership: citation data lands in your warehouse alongside the rest of your marketing analytics, not behind someone else's login. (2) Custom metrics: most teams already have a share-of-voice methodology their finance team reports, and computing it from raw responses means your AI numbers match your existing numbers. (3) Workflow integration: alerting, BigQuery ETL, dbt models, and Looker dashboards plug in as easily as any other API.

Daily on prompts that matter (typically 50–200 long-tail informational queries × 2–3 countries × 7 engines), hourly on a smaller crisis-sensitive subset. Engine-specific cadence floors apply: Grok needs hourly (real-time X content), AI Overview triggers shift daily, and ChatGPT, Perplexity, and Gemini move on weekly+ scales for most queries. The async endpoints (`POST /v1/monitor/

Core fields are consistent across all 7: `text`, `markdown`, `sources` (with `position`, `url`, `label`, `description`), and the `country` parameter. Engine-specific fields ride alongside: `searchQueries` on ChatGPT and Grok, `places`/`shopping_cards` on Perplexity and AI Mode, `videos`/`ads` on AI Overview, and `confidence_level` on Gemini sources. One unified parser plus engine-specific accessors covers everything.
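The "one unified parser plus engine-specific accessors" idea could look roughly like this. It assumes engine-specific fields sit next to the core fields inside `result`, as `searchQueries` does in the sample response above; the `ParsedResponse` class and `parse_response` function are hypothetical names, not part of any SDK.

```python
from dataclasses import dataclass, field

CORE_KEYS = {"text", "markdown", "sources"}


@dataclass
class ParsedResponse:
    engine: str
    text: str
    markdown: str
    sources: list
    extras: dict = field(default_factory=dict)  # engine-specific fields

    def extra(self, name, default=None):
        """Accessor for engine-specific fields such as 'searchQueries' or 'places'."""
        return self.extras.get(name, default)


def parse_response(engine, payload):
    """Split a response into the shared core fields and engine-specific extras."""
    if not payload.get("success"):
        raise ValueError(f"{engine}: request was not successful")
    result = payload["result"]
    return ParsedResponse(
        engine=engine,
        text=result.get("text", ""),
        markdown=result.get("markdown", ""),
        sources=sorted(result.get("sources", []), key=lambda s: s["position"]),
        extras={k: v for k, v in result.items() if k not in CORE_KEYS},
    )


# Hypothetical payload in the documented shape.
payload = {
    "success": True,
    "result": {
        "text": "...",
        "markdown": "...",
        "sources": [
            {"position": 2, "url": "https://example.org/b", "label": "B", "description": ""},
            {"position": 1, "url": "https://example.org/a", "label": "A", "description": ""},
        ],
        "searchQueries": ["ai visibility tools"],
    },
}

parsed = parse_response("chatgpt", payload)
print(parsed.sources[0]["url"], parsed.extra("searchQueries"), parsed.extra("places", []))
```

Keeping extras in a plain dict means a field added to one engine later shows up without a parser change; only the code that reads it needs to know about it.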

One key, one shared credit pool. Per-engine credit counts vary (ChatGPT web search 5, Perplexity 3, Gemini 4, AI Mode 4, Copilot 5, Grok 4), and a Google SERP call with AI Overview enrichment is 5 credits (3 base + 2 AIO). One prompt fanned across all 7 engines therefore costs roughly 30 credits (25 for the six engines above plus 5 for AI Overview), so the Hobby plan ($100/month, 250k credits) covers ~8k cross-engine monitored prompts per month. Most production programs land on Growth ($500/month, 1.56M credits) when they expand to a full competitor-tracking prompt set.
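The budget arithmetic is easy to reproduce. The sketch below uses only the credit counts and plan sizes quoted on this page; the dictionary keys reuse the engine slugs from the fan-out example, and applying the +2 sync surcharge once per call is an assumption about how that surcharge is billed.

```python
# Credits per call as quoted on this page (async/batch rates).
CREDITS_PER_CALL = {
    "chatgpt": 5,
    "perplexity": 3,
    "gemini": 4,
    "aimode": 4,
    "aioverview": 5,  # 3 base SERP + 2 AI Overview enrichment
    "copilot": 5,
    "grok": 4,
}
SYNC_SURCHARGE = 2  # assumed to apply once per sync call
HOBBY_CREDITS = 250_000


def fanout_credits(sync=False):
    """Credits to run one prompt across all 7 engines."""
    surcharge = SYNC_SURCHARGE if sync else 0
    return sum(cost + surcharge for cost in CREDITS_PER_CALL.values())


def monthly_credits(prompts, days=30, sync=False):
    """Credits for a daily loop over `prompts` prompts on every engine."""
    return prompts * days * fanout_credits(sync=sync)


print(fanout_credits())                      # credits per cross-engine prompt
print(HOBBY_CREDITS // fanout_credits())     # cross-engine prompts per month on Hobby
print(monthly_credits(100))                  # 100 prompts, daily, async
print(monthly_credits(100, sync=True))       # same loop using sync calls
```

Under these numbers, a 100-prompt daily loop uses 90,000 credits a month on async endpoints, comfortably inside the 250k Hobby allowance.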

Yes, for the AI search slice. Online brand monitoring traditionally covers social, news, and review surfaces (handled by established social-listening platforms). cloro covers the AI-search surface specifically: ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, and Grok. Most enterprise brand-monitoring stacks now run two pipelines: a social-listening tool for social/news/reviews, plus cloro for AI-engine citations. The two surfaces measure different signals and feed different reports.

Submit per-engine async batches (`POST /v1/monitor/

