What is Generative Engine Optimization (GEO)?

The complete guide to optimizing your content for AI search engines like ChatGPT, Perplexity, and Google AI Overviews.

GEO is what SEO becomes when the search result is an AI-generated answer instead of a list of links. You structure content so AI engines cite your domain. Authority signals carry over from SEO, but the unit is passages instead of pages and the measurement is citation rate instead of rank.


    curl -X POST https://api.cloro.dev/v1/monitor/chatgpt \
      -H "Authorization: Bearer sk_live_your_api_key_here" \
      -H "Content-Type: application/json" \
      -d '{
        "prompt": "what is generative engine optimization",
        "country": "US",
        "include": {
          "markdown": true,
          "sources": true
        }
      }'

    {
      "success": true,
      "result": {
        "text": "Generative Engine Optimization (GEO) is...",
        "sources": [],
        "markdown": "...",
        "searchQueries": []
      }
    }

ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot, and Grok each retrieve and rank citations independently. A page cited on ChatGPT can be invisible on Perplexity for the same prompt. GEO without cross-engine measurement is single-channel optimization.

Four mechanics every GEO program internalizes. They aren't tactics; they're the structural reasons GEO exists as a separate discipline from SEO.

Google indexes pages whole. AI engines retrieve passages (short sections) and synthesize an answer. The unit of competition is no longer the article; it's each citable claim inside it. GEO content is structured for passage extraction: clear claims, primary-source data, and schema markup that delineates each unit.

Position 1 means nothing when there's no SERP. The measurable GEO metrics are citation rate, citation position, share of voice, cross-engine coverage, and entity recognition. All five come from sampling real responses on real prompts.

SEO teams have Search Console, query reports, and click-through data. AI engines ship none of it: no dashboards, no analytics APIs, no citation logs. The only way to operate GEO is to sample real responses on a fixed prompt set, parse the citations, and aggregate over time. cloro productizes that loop.

What helps GEO citation overlaps with SEO: clean claims, primary-source data, schema markup, authority anchors, a recent updatedDate. What's distinct is retrieval-friendly structure: passages an LLM can lift verbatim, defined-term glossaries, FAQ schema, comparison tables. Same content team, different output formats per page.
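To make the FAQ schema point concrete, here is a minimal sketch that builds schema.org FAQPage JSON-LD from question/answer pairs. The helper name `faq_jsonld` and the sample question and answer are illustrative assumptions, not part of any cloro API; the `FAQPage`, `Question`, and `Answer` types are the standard schema.org vocabulary.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Illustrative content only: each answer is a short, self-contained passage.
faq = faq_jsonld([
    (
        "What is Generative Engine Optimization (GEO)?",
        "GEO is the discipline of structuring content so AI engines cite your domain.",
    ),
])

# The printed JSON is what would go inside a <script type="application/ld+json"> tag.
print(json.dumps(faq, indent=2))
```

Keeping each answer to one liftable passage matches the retrieval-friendly structure described above: one question, one citable claim.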

A production GEO measurement loop is one prompt list × N engines × daily cadence × diff over time. Here's the inner loop. The full measurement product is AI Visibility Tracking.

    import requests

    # The GEO measurement loop: target prompts × engines × cadence.
    queries = [
        "what is generative engine optimization",
        "best AI brand monitoring tools",
        "how to optimize for ChatGPT citations",
    ]
    engines = ["chatgpt", "perplexity", "gemini", "aimode"]

    for query in queries:
        for engine in engines:
            response = requests.post(
                f"https://api.cloro.dev/v1/monitor/{engine}",
                headers={
                    "Authorization": "Bearer sk_live_your_api_key_here",
                    "Content-Type": "application/json",
                },
                # Request sources explicitly, as in the curl example; the check below reads them.
                json={"prompt": query, "country": "US", "include": {"markdown": True, "sources": True}},
                timeout=60,  # arbitrary value; avoids hanging on a stalled request
            )
            response.raise_for_status()  # stop on HTTP errors instead of parsing an error body
            sources = response.json()["result"].get("sources", [])
            # Simple substring match on each source URL.
            cited = any("yourdomain.com" in (s.get("url") or "") for s in sources)
            print(f"{engine} | {query[:40]}: cited={cited}")

    {
      "success": true,
      "result": {
        "text": "Generative Engine Optimization (GEO) is the practice of optimizing content for citation in AI-generated answers...",
        "sources": [
          {
            "position": 1,
            "url": "https://cloro.dev/generative-engine-optimization/",
            "label": "Generative Engine Optimization — cloro",
            "description": "Definitional guide to the GEO discipline."
          }
        ],
        "markdown": "Generative Engine Optimization (GEO) is the practice of optimizing content for citation in AI-generated answers..."
      }
    }

Pick a plan that fits your volume. Price per credit drops as you scale.

Credit cost per request varies by provider. The rates below apply to async/batch requests; sync requests add a +2 credit surcharge.

Google News uses the same pricing as Google Search.

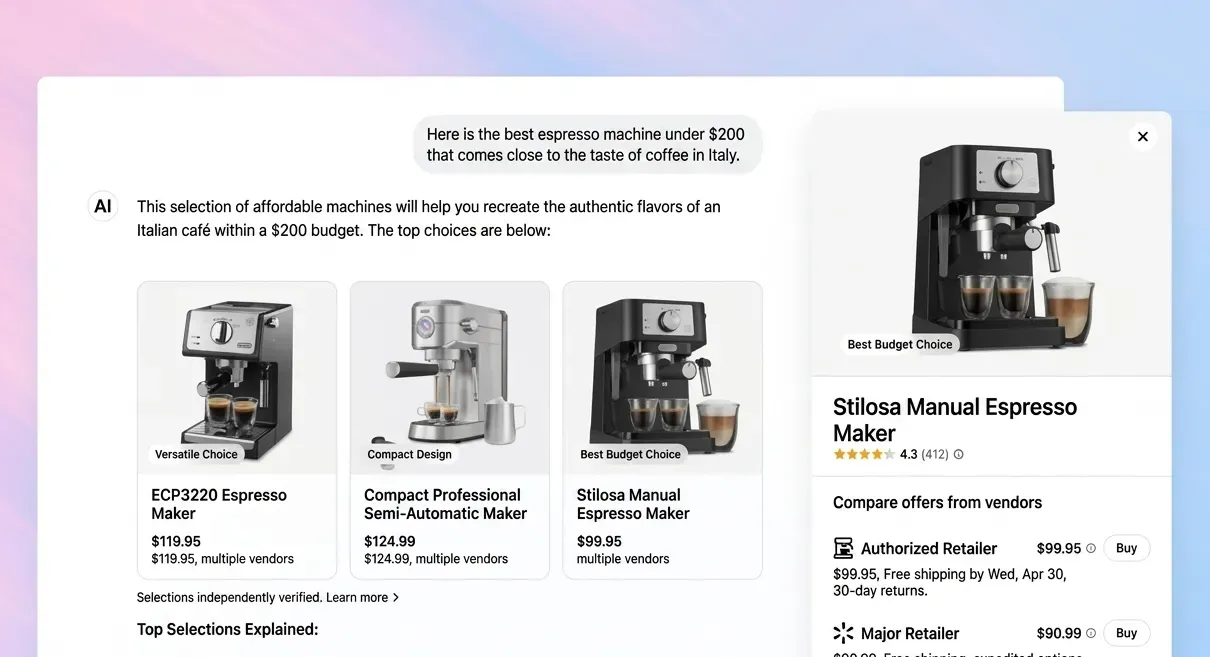

GEO is the discipline of structuring and publishing content so AI engines (ChatGPT, Perplexity, Gemini, AI Overview, AI Mode, Copilot) cite your domain when answering queries your buyers ask. The output is a citation, not a click; the measurement is citation rate, not ranking position; the unit being optimized is the passage, not the page.

"Generative Engine Optimization" was formalized by a 2023 academic paper (Aggarwal et al., "GEO: Generative Engine Optimization"). The term exists because the SEO playbook stops working when the answer is generated rather than ranked. The same authority signals matter, but the surface, the unit, and the measurement loop are different enough that practitioners needed a separate name. The discipline matured in 2024–2025 as ChatGPT, Perplexity, and AI Overview became real traffic-displacement channels.

GEO is one discipline inside the broader AI SEO program. AI SEO is the strategy and operating system (target prompt selection, measurement infrastructure, competitive analysis, cross-engine coordination). GEO is the on-page craft (passage structure, schema markup, citable formats, authority anchoring). Most teams run them together. See AI SEO for the program-level pillar.

Five measurable metrics, in priority order: (1) Citation rate per prompt: what fraction of runs cite your domain. (2) Citation position: where you appear in the cited-sources list. (3) Cross-engine coverage: how many of the seven engines cite you for a target prompt. (4) Share of voice: your citation rate vs each competitor on the same prompt set. (5) Entity recognition: whether engines correctly attribute claims to your brand vs misattribute or omit. All five come from sampling raw API responses.
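As a rough illustration, four of these metrics can be computed from sampled runs with a few lines of Python. The record shape (`prompt`, `engine`, and an ordered `domains` list) and all function names are assumptions made for this sketch, and share of voice is shown simply as citation rates side by side. Entity recognition is left out because it requires analyzing the answer text, not just the source list.

```python
# Hypothetical sampled runs: one record per response, with cited domains
# listed in the order the engine returned them.
runs = [
    {"prompt": "what is geo", "engine": "chatgpt", "domains": ["yourdomain.com", "rival.com"]},
    {"prompt": "what is geo", "engine": "chatgpt", "domains": ["rival.com"]},
    {"prompt": "what is geo", "engine": "perplexity", "domains": ["rival.com", "yourdomain.com"]},
    {"prompt": "what is geo", "engine": "gemini", "domains": []},
]


def citation_rate(runs, domain):
    """Fraction of runs whose sources include the domain."""
    if not runs:
        return 0.0
    return sum(domain in r["domains"] for r in runs) / len(runs)


def mean_position(runs, domain):
    """Average 1-based position across runs that cite the domain, else None."""
    positions = [r["domains"].index(domain) + 1 for r in runs if domain in r["domains"]]
    return sum(positions) / len(positions) if positions else None


def engine_coverage(runs, domain):
    """Sorted list of engines that cited the domain at least once."""
    return sorted({r["engine"] for r in runs if domain in r["domains"]})


def share_of_voice(runs, domains):
    """Citation rate for each tracked domain on the same set of runs."""
    return {d: citation_rate(runs, d) for d in domains}


print(citation_rate(runs, "yourdomain.com"))    # 0.5
print(mean_position(runs, "yourdomain.com"))    # 1.5
print(engine_coverage(runs, "yourdomain.com"))  # ['chatgpt', 'perplexity']
print(share_of_voice(runs, ["yourdomain.com", "rival.com"]))
```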

Engines don't expose dashboards or analytics APIs for who-got-cited, and that's the structural problem GEO measurement infrastructure has to solve. The measurement loop is: define a target prompt list, hit each engine's API on that list, parse `sources[]` from the response, classify by domain, aggregate over time. That sampling pipeline is the substrate every GEO program runs on. Build it yourself or use a hosted layer.
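The parse, classify, and aggregate steps might look like the sketch below. It assumes responses shaped like the example earlier in this guide (`result.sources[]` entries with a `url` field); the function names, the `www.` stripping, and the sample dates are illustrative choices, not cloro's implementation.

```python
from collections import defaultdict
from urllib.parse import urlparse


def cited_domains(response_json):
    """Extract normalized hostnames from result.sources[] of one response."""
    sources = response_json.get("result", {}).get("sources", []) or []
    domains = []
    for source in sources:
        host = urlparse(source.get("url") or "").hostname or ""
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.append(host)
    return domains


def aggregate_daily(samples, domain):
    """Map each date to the fraction of that day's responses citing the domain.

    `samples` is an iterable of (date_string, response_json) pairs.
    """
    hits, totals = defaultdict(int), defaultdict(int)
    for day, response_json in samples:
        totals[day] += 1
        hits[day] += domain in cited_domains(response_json)  # bool counts as 0 or 1
    return {day: hits[day] / totals[day] for day in sorted(totals)}


# Made-up samples for illustration.
cited = {"success": True, "result": {"sources": [
    {"position": 1, "url": "https://cloro.dev/generative-engine-optimization/"},
]}}
not_cited = {"success": True, "result": {"sources": []}}

trend = aggregate_daily(
    [("2025-01-01", cited), ("2025-01-01", not_cited), ("2025-01-02", cited)],
    "cloro.dev",
)
print(trend)  # {'2025-01-01': 0.5, '2025-01-02': 1.0}
```

Matching on the parsed hostname rather than a substring of the URL avoids false positives when a domain name only appears in a path or query string.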

Take 100 target prompts × 3 countries × 4 priority engines × daily sampling = 1,200 API calls/day, or ~36,000 API calls/month per brand. At one credit per call, that sits well inside cloro's Hobby plan ($100/month, 250k credits), covered ~7× over. Most GEO programs grow into Growth ($500/month) when they expand to all 7 engines and add a competitor-tracking prompt set. DIY at the same volume runs roughly $15–30k/month all-in once you account for anti-automation, parser maintenance, and ops.
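The volume estimate is a plain product of the sampling inputs, so it is easy to re-run with your own numbers. The sketch below assumes a 30-day month and one credit per call; since credit cost varies by provider and sync requests carry a surcharge, treat `credits_per_call` as a placeholder. Both function names are hypothetical.

```python
def monthly_calls(prompts, countries, engines, runs_per_day=1, days=30):
    """API calls per month for one brand under a fixed sampling plan."""
    return prompts * countries * engines * runs_per_day * days


def plan_headroom(plan_credits, calls, credits_per_call=1):
    """How many times over a plan's credits cover the monthly call volume."""
    return plan_credits / (calls * credits_per_call)


calls = monthly_calls(prompts=100, countries=3, engines=4)
print(calls)                                     # 36000
print(round(plan_headroom(250_000, calls), 1))   # 6.9
```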

Three steps. (1) Pick 50–200 target prompts: long-tail informational queries where buyers research solutions like yours. (2) Stand up the measurement loop: sample those prompts daily across the engines that matter, track citation rate over time. (3) Where you're not cited, study the cited domains and fix your passages. Iterate weekly. The measurement layer is the bottleneck; most teams skip it and end up "writing for AI" by feel. AI Visibility Tracking is the productized measurement product.
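Step 3 can start from a simple gap report: for every prompt where your domain never appears, list the domains that were cited instead. This is a sketch under assumed inputs; the record shape, the `citation_gaps` name, and the sample domains are all hypothetical.

```python
from collections import Counter


def citation_gaps(runs, domain, top_n=3):
    """For each prompt that never cites `domain`, rank the domains cited instead."""
    by_prompt = {}
    for run in runs:
        by_prompt.setdefault(run["prompt"], []).append(run["domains"])

    gaps = {}
    for prompt, domain_lists in by_prompt.items():
        if any(domain in domains for domains in domain_lists):
            continue  # cited at least once, so not a gap
        counts = Counter(d for domains in domain_lists for d in domains)
        gaps[prompt] = counts.most_common(top_n)
    return gaps


# Made-up sampled runs for illustration.
runs = [
    {"prompt": "best AI brand monitoring tools", "domains": ["rival.com", "other.io"]},
    {"prompt": "best AI brand monitoring tools", "domains": ["rival.com"]},
    {"prompt": "what is generative engine optimization", "domains": ["yourdomain.com"]},
]

gaps = citation_gaps(runs, "yourdomain.com")
print(gaps)  # {'best AI brand monitoring tools': [('rival.com', 2), ('other.io', 1)]}
```

The domains at the top of each list are the pages worth reading first when deciding which passages to rewrite.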



GEO without measurement is guesswork. AI Visibility Tracking is the productized measurement layer: same API, framed for buyers.