AI Crawlers explained: How bots are reading your site

Your website traffic logs are lying to you.

They show Googlebot indexing your pages. They show users from Chrome and Safari. But they might be missing the most aggressive new visitors on the web: AI crawlers.

Unlike traditional search engine bots, which scan your site to rank it, AI crawlers scan your site to learn it. They are harvesting your content to train the next generation of Large Language Models (LLMs).

The decision you make today, to block them or welcome them, will shape your visibility in the AI era.

Table of contents

- Search bots vs AI crawlers

- The big list of AI user agents

- To block or not to block?

- How to control them

- Monitoring the invisible traffic

Search bots vs AI crawlers

For 20 years the deal was simple: you give Google your content, Google gives you traffic.

AI crawlers break that contract.

| Feature | Googlebot | GPTBot / ClaudeBot |
|---|---|---|
| Goal | Index links for search | Ingest text for training |
| Output | Blue link to your site | Synthesized answer |
| Traffic | Direct click-throughs | Often zero clicks |
| Value | SEO & Visibility | GEO & Brand Authority |

The friction. If an AI reads your article and learns everything in it, it can answer user questions without ever sending that user to your site. This is the “zero-click” future that answer engine optimization (AEO) prepares us for.

The big list of AI user agents

There isn’t just one bot anymore. Here are the key agents to know in 2026.

1. GPTBot (OpenAI)

The big one. It crawls the web to collect training data for OpenAI’s GPT models.

- User agent. `GPTBot`

- Impact. High. Blocking it keeps your pages out of future model training, though it cannot recall content that was already collected.

2. ClaudeBot (Anthropic)

Aggressive and thorough. Used to train the Claude family of models.

- User agent. `ClaudeBot`

- Impact. High. Claude models have large context windows, so they can take in entire long-form articles in one pass.

3. Google-Extended

Google’s compromise. This token lets you block your content from training Gemini without de-indexing from Google Search.

- User agent. `Google-Extended`

- Note. This does not affect your SEO rankings.

4. PerplexityBot

Powering the “Answer Engine.” Unlike training bots, this one often fetches data live to answer user queries.

- User agent. `PerplexityBot`

- Impact. Immediate visibility in Perplexity search results.
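To make the list concrete, here is a minimal Python sketch that checks whether a request's User-Agent header contains one of the tokens above. The function name, the mapping, and the sample header strings are illustrative assumptions, not official values from any vendor. `Google-Extended` is left out on purpose: it is a robots.txt control token, and Google's fetching is done with its existing crawler user agents, so it does not show up in request headers.

```python
from typing import Optional

# Tokens from the list above, mapped to the operator named in this article.
# Google-Extended is omitted: it is a robots.txt control token, not a string
# that appears in request User-Agent headers.
AI_CRAWLER_TOKENS = {
    "GPTBot": "OpenAI",
    "ClaudeBot": "Anthropic",
    "PerplexityBot": "Perplexity",
}


def identify_ai_crawler(user_agent: str) -> Optional[str]:
    """Return the matching crawler token, or None if no known token is found."""
    ua = user_agent.lower()
    for token in AI_CRAWLER_TOKENS:
        # Case-insensitive substring match, since real headers wrap the token
        # in a longer browser-style string.
        if token.lower() in ua:
            return token
    return None


if __name__ == "__main__":
    # Hypothetical header values, for illustration only.
    samples = [
        "Mozilla/5.0 (compatible; GPTBot/1.0)",
        "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0 Safari/537.36",
    ]
    for sample in samples:
        print(identify_ai_crawler(sample), "<-", sample)
```

Substring matching is easy to spoof, so treat a match as a hint about who is visiting rather than proof.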

To block or not to block?

This is a business decision as much as a technical one.

Block them if:

- Your content is your product (e.g., New York Times, paywalled research).

- You have sensitive IP and don’t want your proprietary code or data ending up in a public model.

- Server costs are high. AI bots can be aggressive and expensive to serve.

Allow them if:

- You want brand visibility, so ChatGPT knows who you are and recommends you.

- You are in B2B, where being cited as an authority in an AI answer is valuable social proof.

- You practice AI SEO and actively optimize content to be consumed by machines.

How to control them

You control these bots through your robots.txt file.

To block all major AI training bots:

User-agent: GPTBot

Disallow: /

User-agent: ClaudeBot

Disallow: /

User-agent: Google-Extended

Disallow: /

User-agent: CCBot

Disallow: /

To allow them but keep them out of admin areas:

User-agent: GPTBot

Allow: /

Disallow: /admin/

Disallow: /private/

Note: implementing llms.txt, a proposed convention rather than a formal standard, is a proactive way to guide these bots to the right content, instead of just blocking them via robots.txt.
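Before deploying rules like these, it can help to check how a parser reads them. The sketch below uses Python's standard-library `urllib.robotparser` against the block-all rules shown earlier. The helper name `allowed` and the `example.com` URL are placeholders chosen for this example.

```python
from urllib.robotparser import RobotFileParser

# The block-all rules from this section, written as one robots.txt body.
BLOCK_ALL = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: CCBot
Disallow: /
"""


def allowed(robots_txt: str, agent: str, url: str) -> bool:
    """Parse a robots.txt body and report whether `agent` may fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    url = "https://example.com/blog/post"  # placeholder URL
    for agent in ("GPTBot", "ClaudeBot", "CCBot", "Googlebot"):
        # The AI tokens are denied; Googlebot has no matching group and no
        # wildcard group exists, so it stays allowed.
        print(agent, allowed(BLOCK_ALL, agent, url))
```

This only exercises the block-all rules. Python's parser walks the rules of a group in file order, which can differ from the longest-match evaluation described in RFC 9309, so results for mixed Allow/Disallow groups (like the admin example above) deserve a manual check.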

Monitoring the invisible traffic

Standard analytics tools like GA4 filter out “bot traffic” by default. You might be getting thousands of AI visits a day and never know it.
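Raw server access logs still record these requests. As a rough sketch, the Python below counts hits per AI crawler token, assuming logs in the common "combined" format where the User-Agent is the last double-quoted field. The token list, function name, and sample log lines are made up for illustration; adapt them to your own server's format.

```python
import re
from collections import Counter
from typing import Iterable

# Tokens to look for (assumption: the crawlers named in this article).
AI_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")

# In the combined log format the User-Agent is the last double-quoted field.
LAST_QUOTED = re.compile(r'"([^"]*)"\s*$')


def count_ai_hits(lines: Iterable[str]) -> Counter:
    """Count log lines whose User-Agent contains a known AI crawler token."""
    hits: Counter = Counter()
    for line in lines:
        match = LAST_QUOTED.search(line)
        if not match:
            continue  # skip lines that do not end in a quoted field
        ua = match.group(1).lower()
        for token in AI_TOKENS:
            if token.lower() in ua:
                hits[token] += 1
                break
    return hits


# Fabricated sample lines for demonstration, not real traffic.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jan/2026:10:00:00 +0000] "GET /blog/a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Jan/2026:10:00:02 +0000] "GET / HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0 Safari/537.36"',
    '203.0.113.9 - - [10/Jan/2026:10:00:05 +0000] "GET /blog/b HTTP/1.1" 200 4096 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.5 - - [10/Jan/2026:10:00:09 +0000] "GET /blog/c HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
]

if __name__ == "__main__":
    for token, count in count_ai_hits(SAMPLE_LOG).most_common():
        print(token, count)
```

To run it against a real file, pass an open file handle, for example `count_ai_hits(open("access.log"))`, where `access.log` stands in for your own log path.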

Why it matters. If GPTBot stops visiting your site, your fresh content isn’t making it into the model and you go stale to the AI.

The fix is specialized monitoring.

cloro helps you close the loop. Server logs tell you if the bot visited; cloro tells you if the model actually remembers you.

By tracking your brand mentions across LLMs, you can correlate robots.txt changes with your actual AI visibility.

Control who reads your site, and verify what they learn.

Frequently asked questions

How do I block AI crawlers from my site?

You can block AI crawlers like GPTBot and ClaudeBot by adding specific Disallow rules to your robots.txt file. For example, `User-agent: GPTBot` followed on the next line by `Disallow: /`.

Should I block AI crawlers?

It depends on your strategy. If you want brand visibility in AI answers, allow them. If you have proprietary data or paywalled content you want to protect, block them.

What is the difference between Googlebot and GPTBot?

Googlebot crawls to index your site for search links. GPTBot crawls to ingest your content for training AI models. Googlebot drives traffic; GPTBot primarily drives knowledge.

What is Google-Extended and should I block it?

Google-Extended allows you to block your content from training Google's AI models (like Gemini) without affecting your SEO rankings. Whether to block it depends on your data and AI strategy.

How does `llms.txt` relate to AI crawlers?

`llms.txt` is a proactive way to guide AI crawlers to clean, structured versions of your content, ensuring accurate ingestion and reducing token waste, rather than just blocking them.

Related reading

Google Search Operators, Syntax & Commands: The Complete 2026 Guide

Every Google search operator, command, and syntax shortcut in one guide. Exclude sites, target file types, find unlinked mentions, and combine operators for power searches.

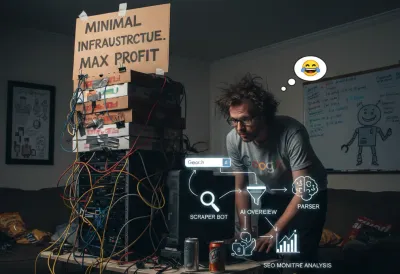

How to scrape Google Search results effortlessly

Complete technical guide to scraping Google Search results, parsing organic results, sponsored ads, AI Overview, and extracting structured data for SEO monitoring and competitive analysis.