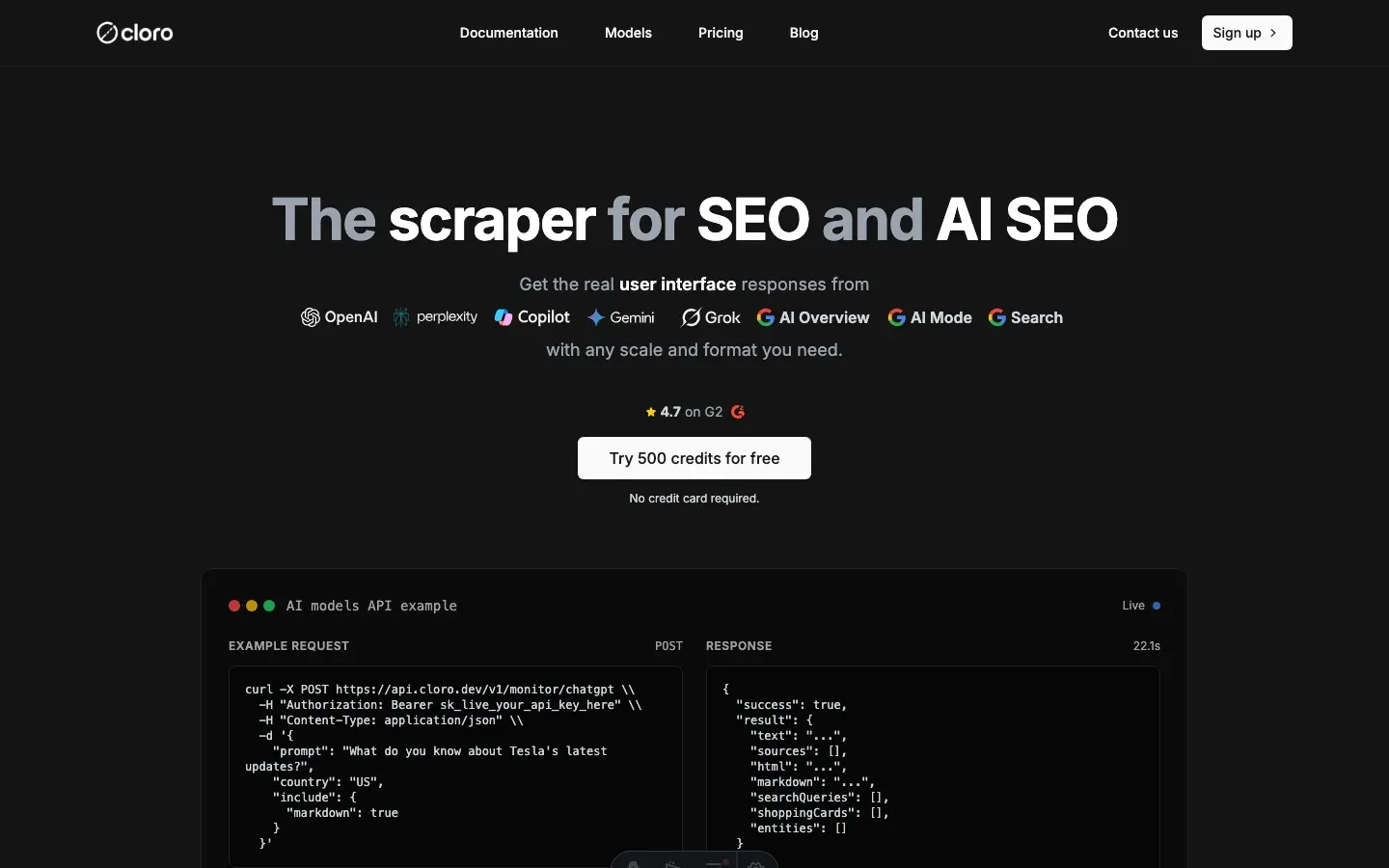

How to scrape Google Search results effortlessly

Google processes over 8.5 billion searches daily. The results page is more than blue links: organic results, sponsored ads, AI Overviews, People Also Ask, and related searches all carry structured data that traditional APIs don’t expose.

Google wasn’t built for programmatic access. It uses anti-bot systems, dynamic content rendering, and geolocation-based personalization that off-the-shelf scrapers don’t handle well.

We’ve parsed millions of Google Search pages. This guide walks through how to scrape Google Search and extract the structured data SEO teams and researchers actually need.

Table of contents

- Why scrape Google Search results?

- Understanding Google Search’s architecture

- The structured data extraction challenge

- Building the scraping infrastructure

- Parsing organic search results

- Extracting AI Overview and related features

- Handling geolocation and pagination

- Using cloro’s managed Google Search scraper

Why scrape Google Search results?

Google’s official search API (the Custom Search JSON API) returns results that look nothing like what users see in the browser.

What you miss with the API:

- The actual search results users see

- AI Overview with source citations

- People Also Ask questions with related content

- Related searches for query expansion

- Location-based result personalization

Without scraping, monitoring rankings or understanding the real user experience isn’t possible. Scraping also tends to cost roughly 12x less than direct API usage at scale.

Common use cases:

- SEO monitoring. Track rankings across locations.

- Competitive analysis. Monitor competitor visibility and strategies.

- Market research. Analyze search trends and user intent.

- Brand monitoring. Track how your brand appears in search.

For more specific AI features, see our guide on scraping Google AI Overview.

Understanding Google Search’s architecture

Google Search runs through several layers that each complicate scraping.

Request flow

- Initial request. User searches via google.com.

- Geolocation routing. Results personalized based on UULE parameters.

- Dynamic rendering. JavaScript loads organic results, AI Overview, and related content.

- Anti-bot checks. Multiple layers of bot detection and CAPTCHA challenges.
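The geolocation step depends on the `uule` parameter. The `uule_grabber` package used in this guide generates it for you. To show what the value contains, here is a minimal sketch of the widely circulated legacy UULE format: the fixed prefix `w+CAIQICI`, one key character selected by the byte length of a canonical location name (for example `London,England,United Kingdom`), then the base64-encoded name. Google does not document this format, implementations differ on details such as base64 padding, and `encode_uule` is a hypothetical name. Treat it as an illustration, not a drop-in replacement.

```python
import base64
import string

# Key alphabet indexed by the length of the canonical name (assumed legacy UULE scheme)
_UULE_KEYS = string.ascii_uppercase + string.ascii_lowercase + string.digits + "-_"


def encode_uule(canonical_name: str) -> str:
    """Hypothetical helper: build a legacy-style UULE value for a canonical location name."""
    raw = canonical_name.encode("utf-8")
    # The key character encodes the byte length of the name (modulo 64 is our assumption for long names)
    key = _UULE_KEYS[len(raw) % len(_UULE_KEYS)]
    encoded = base64.b64encode(raw).decode("ascii")
    return "w+CAIQICI" + key + encoded


print(encode_uule("London"))  # w+CAIQICIGTG9uZG9u
```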

Response structure

Google Search returns dense HTML with several data sections:

- Organic results. Traditional blue links with titles and snippets.

- Sponsored ads. Paid search advertisements with positioning data.

- AI Overview. AI-generated summaries with source citations.

- People Also Ask. Related questions with expandable answers.

- Related searches. Suggested query variations.

- Knowledge panels. Entity information and rich media.

Technical challenges

- Location-based results. UULE parameters for precise geotargeting.

- Dynamic content. JavaScript rendering for interactive features.

- Anti-bot detection. Canvas fingerprinting and behavioral analysis.

- CAPTCHA challenges. reCAPTCHA for suspicious activity. See our notes on how to solve CAPTCHAs.
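Before parsing, it helps to detect when Google has served a challenge instead of results, so the request can be retried on another proxy or handed to a CAPTCHA solver. Below is a minimal heuristic sketch. It assumes that blocked requests either redirect to a `/sorry/` path or return HTML containing reCAPTCHA markup or the "unusual traffic" notice. These markers are observations that can change, and `looks_blocked` is a hypothetical helper.

```python
from urllib.parse import urlparse

# Heuristic markers of a Google challenge page (assumed; verify against live responses)
BLOCK_MARKERS = (
    "g-recaptcha",
    "captcha-form",
    "unusual traffic from your computer network",
)


def looks_blocked(final_url: str, html: str) -> bool:
    """Return True if the response looks like a CAPTCHA/block page rather than results."""
    # Google's block page has historically been served under /sorry/
    if urlparse(final_url).path.startswith("/sorry/"):
        return True
    lowered = html.lower()
    return any(marker in lowered for marker in BLOCK_MARKERS)
```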

The structured data extraction challenge

Google’s HTML is dense and the page loads content dynamically, which makes parsing hard. You’ll likely also hit IP bans that force you to rotate through proxies.
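Proxy rotation can start as a round-robin pool that benches an IP for a while after it gets banned. This is a minimal sketch under that assumption. The `ProxyPool` class, the cooldown value, and the proxy URLs are placeholders, and production pools usually also track per-proxy success rates.

```python
import time
from collections import deque
from typing import Deque, Dict, List, Optional


class ProxyPool:
    """Round-robin proxy pool that skips proxies cooling down after a ban."""

    def __init__(self, proxies: List[str], cooldown_s: float = 300.0) -> None:
        self._queue: Deque[str] = deque(proxies)
        self._banned_until: Dict[str, float] = {}
        self._cooldown_s = cooldown_s

    def next_proxy(self, now: Optional[float] = None) -> Optional[str]:
        """Return the next usable proxy, or None if every proxy is cooling down."""
        now = time.monotonic() if now is None else now
        for _ in range(len(self._queue)):
            proxy = self._queue[0]
            self._queue.rotate(-1)  # move the head to the back (round-robin)
            if self._banned_until.get(proxy, 0.0) <= now:
                return proxy
        return None

    def mark_banned(self, proxy: str, now: Optional[float] = None) -> None:
        """Bench a proxy until its cooldown expires."""
        now = time.monotonic() if now is None else now
        self._banned_until[proxy] = now + self._cooldown_s


pool = ProxyPool(["http://proxy-a:8000", "http://proxy-b:8000"], cooldown_s=60)
```

Call `mark_banned` whenever a response looks like a block page, and the pool routes the next requests around that IP until the cooldown ends.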

Multi-format data sources

Organic results. Traditional search results with position tracking.

```python
# Desktop selector example
".A6K0A .vt6azd.asEBEc"  # Main result container

# Mobile selector example
"[data-dsrp]"  # Mobile result container
```

AI Overview. AI-generated content with source attribution.

```python
# AI Overview container variations
"#m-x-content [data-container-id='main-col']"  # Version 1
"#m-x-content [data-rl]"  # Version 2
```

Related features. People Also Ask and Related Searches.

```python
# People Also Ask questions
"[jsname='Cpkphb']"  # Question containers

# Related searches
".s75CSd"  # Related search suggestions
```

Dynamic selectors

Google’s HTML varies by:

- Device type. Desktop vs mobile layouts.

- Search type. Web, news, images, or shopping.

- Feature availability. AI Overview, knowledge panels.

- Location. Country-specific result formats.
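Because the markup shifts along these axes, a common pattern is to keep an ordered list of selectors per feature and use the first one that matches. The sketch below takes a `query` callable, such as `soup.select` from BeautifulSoup as used elsewhere in this guide, so the fallback logic stays parser-agnostic. The selector list reuses the AI Overview containers shown above, and `select_first_match` is a hypothetical helper.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

T = TypeVar("T")

# Tried in order; selectors taken from the AI Overview examples above
AI_OVERVIEW_SELECTORS = [
    "#m-x-content [data-container-id='main-col']",
    "#m-x-content [data-rl]",
]


def select_first_match(
    query: Callable[[str], List[T]], selectors: Sequence[str]
) -> Tuple[str, List[T]]:
    """Try each selector in order and return the first one that yields matches.

    Returns ("", []) when nothing matches, so callers can log the miss
    and flag the selector list for an update.
    """
    for selector in selectors:
        matches = query(selector)
        if matches:
            return selector, matches
    return "", []
```

With BeautifulSoup this becomes `selector, nodes = select_first_match(soup.select, AI_OVERVIEW_SELECTORS)`. An empty selector in the result is a useful signal that Google has shipped a new layout.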

Content rendering modes

HTTP fetch mode. Direct HTTP requests for basic organic results.

- Faster and cheaper on resources

- Limited to static HTML content

- More likely to trip bot detection

Browser rendering mode. Full browser automation for dynamic content.

- Supports AI Overview and interactive features

- Handles JavaScript-heavy content

- Higher resource usage, but more complete
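This trade-off suggests routing each request to the cheapest mode that can deliver the requested data. The sketch below assumes a request `include` map like the one in this guide's `GoogleRequest`, and it assumes that only browser mode returns AI Overview. The feature names in `BROWSER_ONLY_FEATURES` and the `choose_render_mode` helper are hypothetical.

```python
from typing import Dict, Literal

RenderMode = Literal["http", "browser"]

# Features assumed to need JavaScript rendering (per the comparison above)
BROWSER_ONLY_FEATURES = {"aioverview"}


def choose_render_mode(include: Dict[str, bool], blocked_over_http: bool = False) -> RenderMode:
    """Pick HTTP fetch unless a requested feature or a prior block requires a browser."""
    needs_js = any(include.get(feature, False) for feature in BROWSER_ONLY_FEATURES)
    if needs_js or blocked_over_http:
        # Browser mode: slower, but renders dynamic content and is less likely to trip bot checks
        return "browser"
    return "http"
```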

Building the scraping infrastructure

The pieces below cover what a reliable Google Search scraper needs.

Core components

```python
import asyncio

import uule_grabber
from bs4 import BeautifulSoup
from playwright.async_api import Browser, Page

# Internal modules from the original codebase (not public packages)
from services.captchas.solve import solve_captcha
from services.cookie_stash import cookie_stash
from services.page_interceptor import PlaywrightInterceptor

GOOGLE_COM_URL = "https://www.google.com/search"
ACCEPT_COOKIES_LOCATOR = "#L2AGLb"  # Cookie consent "Accept all" button
MIN_HEAD_CHARS = 500
```

Request configuration

```python
from typing import Dict, Literal, Optional, TypedDict


class GoogleRequest(TypedDict):
    prompt: str  # Search query
    city: Optional[str]  # Location targeting
    country: str  # Country code
    pages: int  # Number of result pages
    device: Literal["desktop", "mobile"]  # Device type
    include: Dict[str, bool]  # Content options
```

Session management

```python
# Cookie persistence for better success rates
existing_cookies = await cookie_stash.get_cookies(
    proxy.ip, GOOGLE_COM_URL, device_type=device_type
)
if existing_cookies:
    # Remove UULE cookie to avoid conflicts
    filtered_cookies = [
        c for c in existing_cookies if c.get("name") != "UULE"
    ]
    await page.context.add_cookies(filtered_cookies)
```

Geolocation support

```python
# UULE parameter for precise location targeting
uule = None
if city:
    uule = uule_grabber.uule(city)

# Build search URL with location parameters
search_url = build_url_with_params(
    GOOGLE_COM_URL,
    {
        "q": prompt,
        "hl": google_params["hl"],  # Language
        "gl": google_params["gl"] if not uule else None,  # Country
        "uule": (uule, False),  # Location
    },
)
```

Parsing organic search results

Organic results need careful parsing because the desktop and mobile layouts differ.
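One parsing detail worth handling: in some response variants, for example pages served to clients without JavaScript, Google wraps result links in a redirect of the form `/url?q=<target>&...` instead of linking to the target directly. The sketch below assumes that pattern and can be applied to each extracted `link` value. `unwrap_google_link` is a hypothetical helper, and the fallback to a `url` parameter is an assumption.

```python
from typing import Optional
from urllib.parse import parse_qs, urljoin, urlparse

GOOGLE_BASE = "https://www.google.com"


def unwrap_google_link(href: Optional[str]) -> Optional[str]:
    """Return the real target of a Google /url?q=... redirect, else the absolute href."""
    if not href:
        return None
    absolute = urljoin(GOOGLE_BASE, href)  # resolve relative links like "/url?q=..."
    parsed = urlparse(absolute)
    is_google = parsed.netloc == "google.com" or parsed.netloc.endswith(".google.com")
    if is_google and parsed.path == "/url":
        params = parse_qs(parsed.query)  # also percent-decodes the values
        # The target usually sits in "q"; "url" is checked as a fallback (assumption)
        for key in ("q", "url"):
            if params.get(key):
                return params[key][0]
    return absolute
```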

Desktop vs mobile selectors

```python
SELECTORS = {
    "desktop": {
        "item": ".A6K0A .vt6azd.asEBEc",
        "title": "h3",
        "link": "a",
        "displayed_link": "cite",
        "snippet": "div.VwiC3b",
    },
    "mobile": {
        "item": "[data-dsrp]",
        "title": ".GkAmnd",
        "link": "a[role='presentation']",
        "displayed_link": ".nC62wb",
        "snippet": "div.VwiC3b",
    },
}
```

Organic result parsing

```python
from typing import List, Literal, Optional, TypedDict

from bs4 import BeautifulSoup


class OrganicResult(TypedDict):
    # Assumed shape, inferred from the dict built in parse_organic_results
    position: int
    title: str
    link: Optional[str]
    displayedLink: str
    snippet: str
    page: int


def parse_organic_results(
    html: str,
    current_page: int,
    device_type: Literal["desktop", "mobile"],
    init_position: int,
) -> List[OrganicResult]:
    """Parse organic search results from Google HTML."""
    organic_results = []
    position = init_position
    soup = BeautifulSoup(html, "html.parser")
    selectors = SELECTORS[device_type]  # Desktop/mobile selector map defined above
    elements = soup.select(selectors["item"])

    for elem in elements:
        # Extract title; skip containers without one
        title_element = elem.select_one(selectors["title"])
        if not title_element:
            continue
        title = title_element.get_text(strip=True)

        # Extract link
        link_element = elem.select_one(selectors["link"])
        if not link_element:
            continue
        link = link_element.get("href")

        # Extract displayed link (the URL breadcrumb shown with the result)
        displayed_link_element = elem.select_one(selectors["displayed_link"])
        displayed_link = (
            displayed_link_element.get_text(strip=True)
            if displayed_link_element
            else ""
        )

        # Extract snippet
        snippet = ""
        snippet_element = elem.select_one(selectors["snippet"])
        if snippet_element:
            snippet = snippet_element.get_text(strip=True)

        result: OrganicResult = {
            "position": position,
            "title": title,
            "link": link,
            "displayedLink": displayed_link.replace(" › ", " > "),
            "snippet": snippet,
            "page": current_page,
        }
        organic_results.append(result)
        position += 1

    return organic_results
```

Extracting AI Overview and related features

AI Overview loads dynamically and ships in several layout versions, so detection has to be defensive.
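Defensive handling applies to the citations too. AI Overview source links can point to the same page with different `#:~:text=` highlight fragments, so deduplicating on the raw `href` may keep near-duplicates. The sketch below assumes that the URL without its fragment is an acceptable identity for a source. `dedupe_sources` is hypothetical, and it works on plain `(label, url)` pairs rather than the project's own types.

```python
from typing import Iterable, List, Tuple
from urllib.parse import urldefrag


def dedupe_sources(pairs: Iterable[Tuple[str, str]]) -> List[dict]:
    """Keep the first occurrence of each page, ignoring URL fragments like #:~:text=."""
    seen = set()
    sources = []
    for label, url in pairs:
        if not url or not label:
            continue
        key = urldefrag(url).url  # strip everything after '#'
        if key in seen:
            continue
        seen.add(key)
        sources.append({"position": len(sources) + 1, "label": label, "url": key})
    return sources
```

Storing the fragment-free URL also makes sources easier to join against organic results later.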

AI Overview detection and parsing

```python
from typing import List

from playwright.async_api import Locator, Page

# AI Overview container locators
SV6KPE_LOCATOR = "#m-x-content [data-container-id='main-col']"
NON_SV6KPE_LOCATOR = "#m-x-content [data-rl]"
MAIN_COL_LOCATOR = f"{SV6KPE_LOCATOR}, {NON_SV6KPE_LOCATOR}"


async def wait_for_ai_overview(page: Page) -> str:
    """Wait for AI Overview to load and return the selector found."""
    try:
        # Wait for either AI Overview version
        await page.wait_for_selector(
            MAIN_COL_LOCATOR, timeout=10_000
        )
        # Check which version loaded
        if await page.locator(SV6KPE_LOCATOR).count() > 0:
            return SV6KPE_LOCATOR
        else:
            return NON_SV6KPE_LOCATOR
    except Exception:
        return ""
```

AI Overview source extraction

```python
async def extract_aioverview_sources(page: Page) -> List[LinkData]:
    """Extract sources from AI Overview."""
    sources = []
    seen_urls = set()
    position = 1

    # AI Overview sources selector
    AI_OVERVIEW_SOURCES_LOCATOR = "#m-x-content ul > li > a, #m-x-content ul > li > div > a"
    aioverview_sources = await page.locator(AI_OVERVIEW_SOURCES_LOCATOR).all()

    for source_elem in aioverview_sources:
        url = await source_elem.get_attribute("href")
        label = await source_elem.get_attribute("aria-label")
        if url and label and url not in seen_urls:
            description = await _extract_aioverview_source_description(source_elem)
            source = LinkData(
                position=position,
                label=label,
                url=url,
                description=description or "",
            )
            sources.append(source)
            seen_urls.add(url)
            position += 1

    return sources


async def _extract_aioverview_source_description(element: Locator) -> str | None:
    """Extract description for AI Overview source."""
    try:
        parent = element.locator("xpath=..")
        description_div = parent.locator(".gxZfx").first
        return await description_div.inner_text(timeout=1000)
    except Exception:
        pass
    return None
```

People Also Ask and Related searches

```python
def parse_people_also_ask(html: str) -> List[PeopleAlsoAskResult]:
    """Parse People Also Ask section from Google HTML."""
    people_also_ask = []
    soup = BeautifulSoup(html, "html.parser")

    # People Also Ask questions
    question_elements = soup.select("[jsname='Cpkphb']")
    for elem in question_elements:
        question = elem.get_text(strip=True)
        if question:
            result: PeopleAlsoAskResult = {
                "question": question,
                "type": "UNKNOWN",  # Could be expanded with link detection
            }
            people_also_ask.append(result)

    return people_also_ask


def parse_related_searches(
    html: str,
    search_url: str,
    device_type: Literal["desktop", "mobile"],
) -> List[RelatedSearchResult]:
    """Parse related searches from Google HTML."""
    related_searches = []
    soup = BeautifulSoup(html, "html.parser")

    # Related searches selector
    related_elements = soup.select(".s75CSd")
    for elem in related_elements:
        query = elem.get_text(strip=True)
        if query:
            result: RelatedSearchResult = {
                "query": query,
                "link": None,  # Could be constructed from query
            }
            related_searches.append(result)

    return related_searches
```

Handling geolocation and pagination

Results shift heavily by location, and pagination needs its own handling.
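Both concerns come down to query-string parameters: `hl` plus `gl` or `uule` for language and location, and `start` for pagination (a 0-based result offset, 10 results per page by default). Here is a stdlib-only sketch of a URL builder along those lines. It follows the rule used in this guide's code of dropping `gl` when `uule` is set. It also appends `uule` without percent-encoding, which is our reading of the `(uule, False)` argument above and should be treated as an assumption. `build_search_url` is a hypothetical helper, not the `build_url_with_params` function from the original code.

```python
from typing import Optional
from urllib.parse import urlencode

GOOGLE_SEARCH_URL = "https://www.google.com/search"
RESULTS_PER_PAGE = 10


def build_search_url(
    query: str,
    page: int = 1,
    hl: str = "en",
    gl: Optional[str] = None,
    uule: Optional[str] = None,
) -> str:
    """Build a Google Search URL for a 1-based page number (hypothetical helper)."""
    params = {"q": query, "hl": hl}
    if uule is None and gl:
        params["gl"] = gl  # country-level targeting only when no city-level uule is set
    if page > 1:
        params["start"] = str((page - 1) * RESULTS_PER_PAGE)  # 0-based result offset
    url = f"{GOOGLE_SEARCH_URL}?{urlencode(params)}"
    if uule:
        # Appended as-is, without percent-encoding (assumption, see lead-in)
        url += f"&uule={uule}"
    return url
```

The same helper could also fill the `link` field that `parse_related_searches` leaves as `None`, for example with `build_search_url(query, hl="en", gl="US")` for each suggestion.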

Geolocation targeting

```python
from typing import Dict, Optional

import uule_grabber


def get_location_parameters(city: Optional[str], country: str) -> Dict[str, str]:
    """Get location-specific parameters for Google Search."""
    params = {
        "hl": "en",  # Default language
        "gl": country.upper(),  # Country code
    }
    # Add UULE for city-level targeting
    if city:
        uule = uule_grabber.uule(city)
        if uule:
            params["uule"] = uule
            # Remove gl when using UULE for precision
            del params["gl"]
    return params
```

Multi-page support

```python
from typing import Dict, List, Optional, Tuple

from playwright.async_api import Page


async def scrape_multiple_pages(
    page: Page,
    prompt: str,
    n_pages: int,
    google_params: Dict[str, str],
    uule: Optional[str],
    device_type: str,
) -> Tuple[List[OrganicResult], List[str]]:
    """Scrape multiple pages of Google Search results."""
    organic_results = []
    html_pages = []

    for current_page in range(1, n_pages + 1):
        if current_page == 1:
            # First page - use existing page content
            html = await page.content()
        else:
            # Build next page URL
            next_page_params = {
                **google_params,
                "q": prompt,
                "start": (current_page - 1) * 10,  # 0-based result offset, 10 per page
            }
            if uule:
                # Same rule as the first page: uule replaces gl
                next_page_params.pop("gl", None)
                next_page_params["uule"] = uule
            next_page_url = build_url_with_params(
                GOOGLE_COM_URL, next_page_params
            )
            # Fetch next page with the browser context's cookies
            response = await page.context.request.fetch(next_page_url)
            html = await response.text()

        # Parse organic results for current page; positions continue across pages
        page_results = parse_organic_results(
            html,
            current_page=current_page,
            device_type=device_type,
            init_position=len(organic_results) + 1,
        )
        organic_results.extend(page_results)
        html_pages.append(html)

    return organic_results, html_pages
```

Using cloro’s managed Google Search scraper

Building and maintaining a reliable Google Search scraper takes real engineering investment.

Infrastructure requirements

Anti-bot evasion:

- Browser fingerprinting rotation

- CAPTCHA solving services

- Proxy pool management

- Rate limiting and backoff strategies

Performance:

- Geographic proxy distribution

- Cookie persistence systems

- Parallel request processing

- Error handling and retry logic

Maintenance overhead:

- Continuous selector updates

- Anti-bot measure adaptation

- Performance monitoring

- Compliance management
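Of these, rate limiting and backoff are the cheapest to get right early. Below is a minimal sketch of retries with capped exponential backoff and full jitter. It assumes a fetch function that raises on a block or transient error. `with_backoff` and `TransientError` are hypothetical names, and the delays are illustrative rather than tuned for Google.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Raised by a fetch function for retryable failures (e.g. 429s, CAPTCHA pages, timeouts)."""


def with_backoff(
    fetch: Callable[[], T],
    max_attempts: int = 5,
    base_s: float = 1.0,
    cap_s: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fetch(), retrying TransientError with capped exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return fetch()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Full jitter: random delay in [0, min(cap, base * 2^attempt)]
            delay = random.uniform(0, min(cap_s, base_s * 2 ** attempt))
            sleep(delay)
    raise RuntimeError("max_attempts must be at least 1")
```

Randomizing the delay keeps parallel workers from retrying in lockstep. The injectable `sleep` makes the retry logic easy to test without real waits.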

Managed solution API

```python
import requests

# Simple API call - no browser management needed
response = requests.post(
    "https://api.cloro.dev/v1/monitor/google-search",
    headers={
        "Authorization": "Bearer sk_live_your_api_key",
        "Content-Type": "application/json",
    },
    json={
        "query": "best coffee shops",
        "country": "US",
        "pages": 3,
        "include": {
            "html": True,
            "aioverview": True,
        },
    },
    timeout=120,  # Client-side timeout in seconds (illustrative value)
)
response.raise_for_status()  # Raise on HTTP error status codes

result = response.json()
print(f"Found {len(result['result']['organicResults'])} organic results")
print(f"AI Overview: {'Yes' if result['result'].get('aioverview') else 'No'}")
```

Response structure

```json
{
  "success": true,
  "result": {
    "sponsoredResults": [
      {
        "position": 1,
        "title": "Best Coffee NYC - Order Online",
        "link": "https://example-coffee.com",
        "displayedLink": "example-coffee.com",
        "snippet": "Premium coffee delivered. Fast shipping...",
        "page": 1
      }
    ],
    "organicResults": [
      {
        "position": 1,
        "title": "Best Coffee Shops in NYC 2024",
        "link": "https://example.com/coffee-shops",
        "displayedLink": "example.com",
        "snippet": "Guide to New York's best coffee shops...",
        "page": 1
      }
    ],
    "peopleAlsoAsk": [
      {
        "question": "What is the most famous coffee shop in NYC?",
        "type": "LINK",
        "title": "Iconic NYC Coffee Shops",
        "link": "https://example.com/iconic-coffee"
      }
    ],
    "relatedSearches": [
      {
        "query": "best coffee shops brooklyn",
        "link": "https://google.com/search?q=best+coffee+shops+brooklyn"
      }
    ],
    "aioverview": {
      "text": "New York City has a vibrant coffee culture...",
      "sources": [
        {
          "position": 1,
          "label": "NYC Coffee Guide 2024",
          "url": "https://example.com/nyc-coffee",
          "description": "Comprehensive guide to NYC coffee scene"
        }
      ]
    }
  }
}
```

Benefits

- P50 latency under 8s, versus minutes per query for manual scraping

- No infrastructure costs. We handle browsers, proxies, and maintenance.

- Structured data. Automatic parsing of organic results, AI Overview, and related features.

- Compliance. Ethical scraping practices and rate limiting.

- Scalability. Thousands of requests without tripping Google’s defenses.

For SEO teams tracking rankings, businesses watching competitors, or researchers analyzing search trends, structured Google Search data is hard to replace.
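For rank tracking, the main question is usually where a given domain first appears in `organicResults`. Below is a minimal sketch that works on the response structure shown above. It assumes that a match on the link's hostname, including subdomains, is what you want. `find_rank` is a hypothetical helper.

```python
from typing import Any, Dict, Optional
from urllib.parse import urlparse


def find_rank(response: Dict[str, Any], domain: str) -> Optional[int]:
    """Return the position of the first organic result on `domain` (or a subdomain)."""
    domain = domain.lower().lstrip(".")
    for item in response.get("result", {}).get("organicResults", []):
        host = (urlparse(item.get("link") or "").hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return item["position"]
    return None  # not ranked within the scraped pages
```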

For most developers and businesses, we recommend cloro’s Google Search scraper. You get:

- Reliable scraping infrastructure out of the box

- Automatic data parsing and structuring

- Built-in anti-bot evasion and rate limiting

- Error handling and retries

- Structured JSON output with all metadata

- Geolocation targeting and multi-page support

Building and maintaining this yourself usually runs $5,000-10,000/month between development time, browser instances, proxy services, and ongoing fixes.

If you need a custom system, the code above is a reasonable starting point. Plan for ongoing maintenance, since Google updates its anti-bot measures and result page layouts often.

If you want to skip the infrastructure work, get started with cloro’s API.

Frequently asked questions

How do I handle Google CAPTCHAs?

You can't easily solve them yourself at scale. Use a CAPTCHA solving service or, better yet, avoid them by rotating high-quality residential proxies.

What is the best library for scraping Google?

For organic results, plain HTTP requests with realistic browser headers are fastest. For AI features, a browser automation library such as Playwright is essential.

How many pages can I scrape before getting blocked?

Without proxies, maybe 5-10. With a good proxy network, millions. It's entirely dependent on your IP reputation.

Why is geolocation important for Google Search scraping?

Google personalizes search results heavily based on the user's physical location. To get accurate, unbiased data for specific regions, you need to simulate searches from those precise locations using `uule` parameters and proxies.

What's the difference between HTTP fetch and browser rendering for Google?

HTTP fetch is faster but only gets static HTML, missing dynamic elements like AI Overviews. Browser rendering (Playwright/Selenium) executes JavaScript to get the full page, but is slower and more resource-intensive.
