How to scrape Microsoft Copilot: WebSocket events + auth sessions

Microsoft Copilot is the only mainstream AI search product that streams its answer over a WebSocket instead of Server-Sent Events. Frame events arrive as discrete {event: "appendText"} and {event: "citation"} JSON messages on a long-lived connection to copilot.microsoft.com/c/api/chat, terminated by a single {event: "done"}. There is no SSE chunk parser to reuse from your ChatGPT/Perplexity stack.

That difference matters in two places: the network interceptor (Playwright’s page.on('websocket') instead of page.on('response')) and the citation grouping (Copilot bunches multiple citation events between appendText events into pills, which need post-processing into a flat sources array). Layer on Microsoft account session cookies — Copilot drops the session cookie aggressively if it sees datacenter fingerprints — and you have a stack with effectively no overlap with the SSE engines.

After parsing 3M+ Copilot WebSocket frames at cloro, here’s the protocol map and the cookie-stash strategy that keeps sessions alive.

Table of contents

- Why scrape Microsoft Copilot responses?

- Understanding Copilot’s WebSocket architecture

- The WebSocket event parsing challenge

- Building the scraping infrastructure

- Parsing streaming text and citations

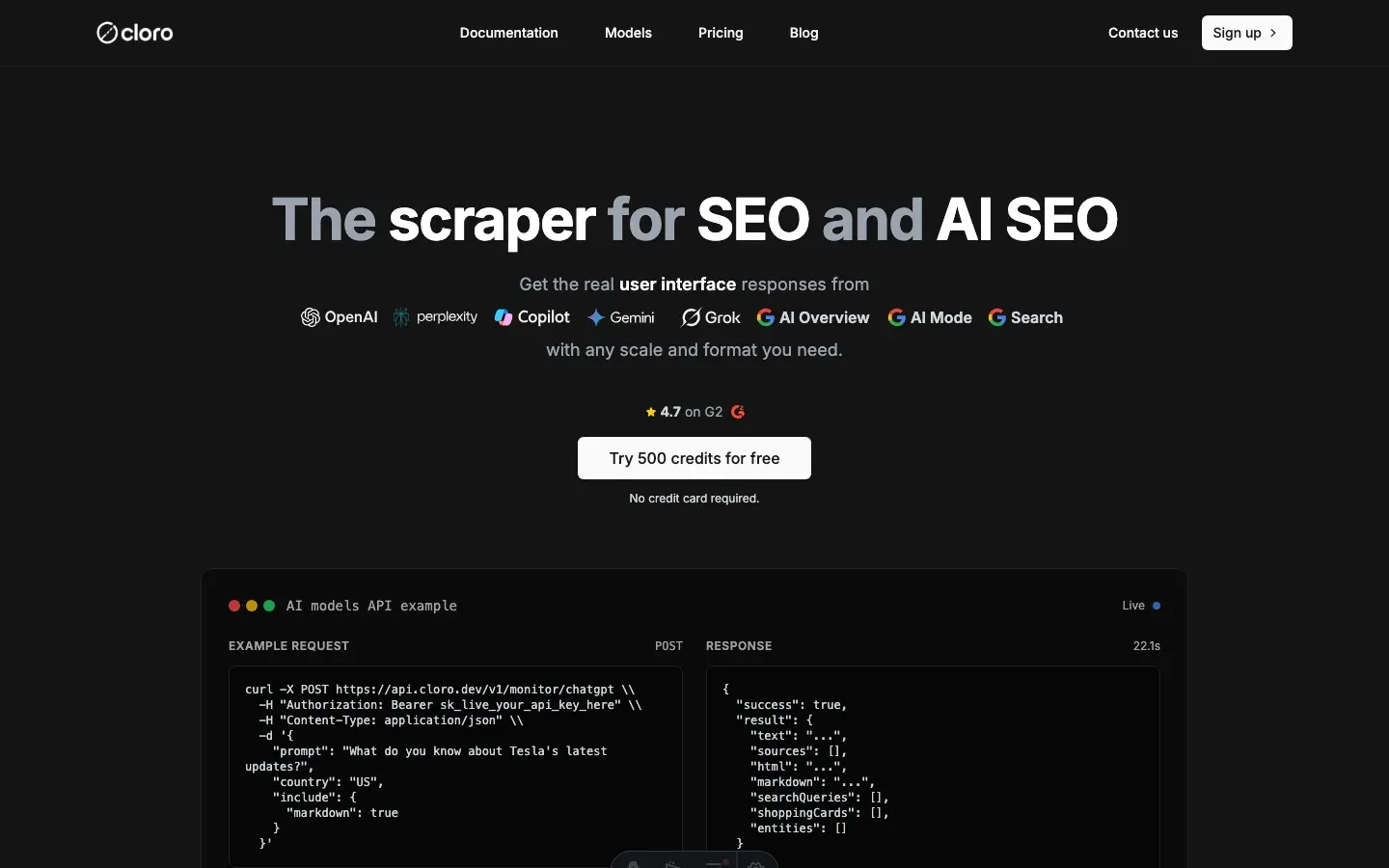

- Using cloro’s managed Copilot scraper

Why scrape Microsoft Copilot responses?

Copilot’s value is its Microsoft-ecosystem citation bias. Run the same prompt across ChatGPT, Perplexity, and Copilot, and Copilot consistently surfaces docs.microsoft.com, learn.microsoft.com, and support.microsoft.com as primary citations on enterprise IT and developer-tooling queries. For a brand monitoring setup focused on Microsoft 365 / Azure / Power Platform, Copilot is the engine where your docs should be cited — and the only way to verify that is the WebSocket frame stream.

What only the WebSocket frames expose:

- Inline citation pills. Copilot groups consecutive `citation` events between text into pills. The pill grouping is what powers the `[1][2]` markers in the rendered answer; the official Bing API doesn't expose pill structure.

- Microsoft-source attribution rate. `citation.url` lets you compute “what % of citations point at `*.microsoft.com` domains” for a given prompt, which is the cleanest signal of how well your docs cover a query.

- Citation density. The citations-per-text-event ratio is a proxy for how grounded a Copilot answer is; useful for content strategy on technical topics.

- Mode-specific behavior. Copilot has Quick Chat and Smart (Think Deeper) modes, reached via different keyboard paths; each emits different event volumes and citation patterns.
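Mode comparison mostly comes down to how the event stream is shaped. The sketch below profiles one captured stream. The function name is hypothetical. Run it on the events from a Quick-mode scrape and a Smart-mode scrape of the same prompt, then compare the outputs. Any non-`citation` event is treated as closing a pill. That matches the grouping rule used in this article as long as only the three documented event types appear.

```python
from collections import Counter
from typing import Any, Dict, List


def event_profile(events: List[Dict[str, Any]]) -> Dict[str, Any]:
    """Summarize one response's event stream (hypothetical helper)."""
    counts = Counter(e.get("event") for e in events)

    pill_sizes: List[int] = []
    run = 0  # length of the current run of consecutive citation events
    for e in events:
        if e.get("event") == "citation":
            run += 1
        else:
            # Any other event ends the run, closing the pill
            if run:
                pill_sizes.append(run)
            run = 0
    if run:  # stream ended while a pill was still open
        pill_sizes.append(run)

    return {
        "event_counts": dict(counts),
        "pill_sizes": pill_sizes,
        "pill_count": len(pill_sizes),
        "max_pill_size": max(pill_sizes, default=0),
    }
```

Comparing `pill_count` and `max_pill_size` across modes shows whether one mode bunches more sources per claim.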

Use cases the WebSocket stream supports:

- Microsoft-doc citation tracking. “Is our PR-merged learn.microsoft.com article showing up in Copilot’s pills for the target query yet?”

- Internal-vs-external citation balance. “What ratio of microsoft.com vs third-party citations does Copilot use for our product category?”

- Mode comparison. “Does Smart mode cite our docs at a higher rate than Quick mode?”

- Enterprise compliance auditing. “Capture the full Copilot answer + sources for every prompt our support agents send, with timestamps.”
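The first use case reduces to a membership check over the captured pills. The sketch assumes pills are lists of dicts with a `url` key, which is the shape the parser later in this article produces. The function names are hypothetical. The normalization is our own choice: it lowercases the host, drops the `#fragment`, and strips a trailing slash, so trivially different forms of the same docs URL still match. Query strings are kept because docs sites can use them to select content versions.

```python
from typing import Any, Dict, List
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Lowercase scheme and host, drop the fragment, strip a trailing slash."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, parts.query, ""))


def pills_citing(pills: List[List[Dict[str, Any]]], target_url: str) -> List[int]:
    """Return the 1-based indexes of the pills that cite target_url."""
    target = normalize_url(target_url)
    return [
        i
        for i, pill in enumerate(pills, start=1)
        if any(normalize_url(c.get("url", "")) == target for c in pill)
    ]
```

A non-empty result answers “yes, and in which pills”. An empty list means the article wasn't cited for that prompt in this run.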

For a similar guide on a different protocol, see how to scrape ChatGPT (SSE-based).

Understanding Copilot’s WebSocket architecture

Copilot uses a real-time communication system based on WebSocket events.

Response generation flow:

- Page navigation. Load the Copilot web interface.

- WebSocket connection. Intercept WebSocket messages from the chat endpoint.

- Event collection. Capture all JSON events sent via WebSocket.

- Response parsing. Process collected events once completion is detected.

- Microsoft knowledge. Draws on Microsoft product and service data.

WebSocket event interception:

```
// Copilot sends events via WebSocket from:
// copilot.microsoft.com/c/api/chat
// Events are simple JSON objects:
{
  "event": "appendText",
  "text": "To improve team productivity..."
}
{
  "event": "citation",
  "title": "Microsoft 365 Documentation",
  "url": "https://docs.microsoft.com/..."
}
{
  "event": "done"
}
```

Event types to handle:

- `appendText`: text content chunks

- `citation`: source citations embedded inline

- `done`: completion marker
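Before dispatching on `event`, the frame handler has to decode each payload. Playwright delivers it as `str` or `bytes`. The sketch below is defensive: it assumes the connection may also carry frames that are not JSON, or that use event types beyond these three. Those aren't catalogued here, so the filter simply drops them. The function and constant names are hypothetical.

```python
import json
from typing import Any, Dict, Optional, Union

# The three event types documented above; anything else is skipped
KNOWN_EVENTS = {"appendText", "citation", "done"}


def decode_frame(payload: Union[str, bytes]) -> Optional[Dict[str, Any]]:
    """Decode one WebSocket frame; return the event dict, or None to skip it."""
    if isinstance(payload, bytes):
        try:
            payload = payload.decode("utf-8")
        except UnicodeDecodeError:
            return None  # binary frame that isn't UTF-8 text
    try:
        obj = json.loads(payload)
    except json.JSONDecodeError:
        return None  # keepalive or other non-JSON frame
    if not isinstance(obj, dict) or obj.get("event") not in KNOWN_EVENTS:
        return None
    return obj
```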

The WebSocket event parsing challenge

Copilot scraping comes down to parsing real-time WebSocket events and reconstructing a structured response.

WebSocket event stream:

```
# Raw Copilot WebSocket events example
{
  "event": "appendText",
  "text": "To improve team productivity using Microsoft 365"
}
{
  "event": "citation",
  "title": "Microsoft 365 Documentation",
  "url": "https://docs.microsoft.com/en-us/microsoft-365/"
}
{
  "event": "appendText",
  "text": ", I recommend implementing SharePoint for document collaboration"
}
{
  "event": "done"
}
```

Parsing challenges:

- Real-time streaming. Content arrives via WebSocket events, not HTTP responses.

- Mixed event types. Text and citation events are interleaved.

- Citation pill grouping. Multiple citations can be grouped together.

- Event ordering. Citation positions need to be tracked accurately.
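Text and citations share one ordered stream. That means a pill's position in the answer is simply how much text has been emitted when the pill arrives. The sketch below records that character offset, which is what you need to place `[1][2]` markers or to check whether a given sentence is cited. The function name is hypothetical. It assumes a pill attaches to the end of the preceding `appendText` chunk.

```python
from typing import Any, Dict, List, Tuple


def pills_with_offsets(
    events: List[Dict[str, Any]],
) -> Tuple[str, List[Tuple[int, List[str]]]]:
    """Return the full answer text plus (char_offset, urls) for every pill.

    char_offset is the number of text characters emitted before the pill,
    i.e. the index in the final text where its markers belong.
    """
    text_parts: List[str] = []
    length = 0
    pills: List[Tuple[int, List[str]]] = []
    current: List[str] = []

    for event in events:
        kind = event.get("event")
        if kind == "citation":
            current.append(event.get("url", ""))
        elif kind in ("appendText", "done"):
            # Text or completion closes any open pill at the current offset
            if current:
                pills.append((length, current))
                current = []
            if kind == "done":
                break
            chunk = event.get("text", "")
            text_parts.append(chunk)
            length += len(chunk)

    if current:  # stream ended without a closing event
        pills.append((length, current))
    return "".join(text_parts), pills
```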

Python WebSocket parsing implementation:

```python
import json
from typing import List, Dict, Any


class CopilotWebSocketParser:
    def __init__(self):
        self.text_parts = []
        self.citation_pills: List[List[Dict[str, Any]]] = []
        self.current_pill: List[Dict[str, Any]] = []
        self.citation_position = 1
        self.last_event_was_citation = False
        self.is_complete = False

    def parse_websocket_events(self, events: List[Dict[str, Any]]) -> Dict[str, Any]:
        """
        Parse Copilot WebSocket events into structured response.
        """
        for event in events:
            event_type = event.get("event")

            # Collect text chunks
            if event_type == "appendText":
                text_chunk = event.get("text", "")
                self.text_parts.append(text_chunk)

                # If we were building a citation pill and now see text, save the pill
                if self.last_event_was_citation and self.current_pill:
                    self.citation_pills.append(self.current_pill)
                    self.current_pill = []
                self.last_event_was_citation = False

            # Collect citations
            elif event_type == "citation":
                citation_data = {
                    "position": self.citation_position,
                    "label": event.get("title", ""),
                    "url": event.get("url", ""),
                    "description": None,
                }
                self.current_pill.append(citation_data)
                self.citation_position += 1
                self.last_event_was_citation = True

            # Check for completion
            elif event_type == "done":
                self.is_complete = True
                break

        # Don't forget to add the last citation pill if it exists
        if self.current_pill:
            self.citation_pills.append(self.current_pill)
            self.current_pill = []  # avoid re-adding it on a later call

        # Combine all text parts
        full_text = "".join(self.text_parts)

        # Flatten citation pills into unique sources
        sources = self.flatten_citation_pills()

        return {
            "text": full_text,
            "sources": sources,
            "is_complete": self.is_complete,
        }

    def flatten_citation_pills(self) -> List[Dict[str, Any]]:
        """
        Flatten grouped citation pills into unique sources with corrected positions.
        """
        seen_urls = set()
        sources: List[Dict[str, Any]] = []
        position = 1

        for pill in self.citation_pills:
            for citation_data in pill:
                url = citation_data["url"]
                if url not in seen_urls:
                    seen_urls.add(url)
                    sources.append({
                        "position": position,
                        "label": citation_data["label"],
                        "url": url,
                        "description": citation_data["description"],
                    })
                    position += 1

        return sources
```

Citation pill grouping logic:

```python
from typing import Any, Dict, List


def group_consecutive_citations(events: List[Dict[str, Any]]) -> List[List[Dict[str, Any]]]:
    """
    Group consecutive citation events into citation pills.
    Copilot groups multiple citations that appear together.
    """
    citation_pills = []
    current_pill = []
    last_was_citation = False

    for event in events:
        if event.get("event") == "citation":
            citation_data = {
                "position": len(current_pill) + 1,  # position within this pill
                "label": event.get("title", ""),
                "url": event.get("url", ""),
                "description": None,
            }
            current_pill.append(citation_data)
            last_was_citation = True

        elif event.get("event") == "appendText" and last_was_citation:
            # Text event after citations means the pill is complete
            if current_pill:
                citation_pills.append(current_pill)
                current_pill = []
            last_was_citation = False

    # Add the final pill if it exists
    if current_pill:
        citation_pills.append(current_pill)

    return citation_pills
```

Building the scraping infrastructure

Copilot’s stack is what happens when an SSE-trained scraper team meets a WebSocket-only product. None of the SSE primitives (chunk parsers, data: line splitters, [DONE] sentinel detectors) carry over. The component list below is what specifically defeats Copilot’s WebSocket + Microsoft-auth combination.

Required components for Copilot specifically:

- `page.on("websocket")` interceptor with a frame handler. Playwright’s `page.on("response")` does nothing here, because Copilot’s chat traffic is exclusively WebSocket. You hook the `framereceived` event and parse each frame as JSON.

- Cookie stash keyed on `(proxy_ip, copilot.microsoft.com)`. Microsoft drops the session cookie within minutes on a fresh IP. Stashing the cookie set per IP lets the next request on that IP skip the auth dance entirely.

- Citation-pill grouping state machine. The grouping rule is “consecutive `citation` events form one pill, terminated by the next `appendText` or `done`”. Without this, sources come out as a flat list and the `[1][2]` inline markers in the answer don’t line up.

- Mode-selection keyboard navigation. Copilot’s UI doesn’t expose a stable selector for the mode picker, so the team uses keyboard shortcuts (`Tab`, `Tab`, `Enter`) to land on Quick or Smart mode. Selector-based navigation breaks within days; keyboard-based survives months.

- `event: "done"` completion detector. SSE’s `[DONE]` sentinel arrives as a `data:` line inside the response body. Copilot’s done event is a discrete JSON frame instead, so check for it inside the frame handler, not in the response body.
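The completion detector can be event-driven rather than polled. The sketch below uses `asyncio.Event`. The class name and the 60-second default are our own choices, not part of Copilot’s or Playwright’s API. Playwright only supplies the `framereceived` callback, which passes the frame payload as `str` or `bytes`. Wiring would look like `ws.on("framereceived", waiter.on_frame)`.

```python
import asyncio
import json
from typing import Any, Dict, List, Union


class DoneWaiter:
    """Collects Copilot events and wakes the waiter as soon as `done` arrives."""

    def __init__(self) -> None:
        # Create inside the running event loop (required on Python < 3.10)
        self.events: List[Dict[str, Any]] = []
        self._done = asyncio.Event()

    def on_frame(self, payload: Union[str, bytes]) -> None:
        """Synchronous handler suitable for ws.on("framereceived", ...)."""
        try:
            event = json.loads(payload)
        except ValueError:  # covers JSONDecodeError and UnicodeDecodeError
            return
        if not isinstance(event, dict):
            return
        self.events.append(event)
        if event.get("event") == "done":
            self._done.set()

    async def wait(self, timeout: float = 60.0) -> List[Dict[str, Any]]:
        """Return the collected events once `done` lands; raises asyncio.TimeoutError otherwise."""
        await asyncio.wait_for(self._done.wait(), timeout)
        return self.events
```

Compared with the 500 ms polling loop in the scraper implementation, this returns as soon as the frame is handled. A captcha check would then need its own task.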

Complete scraper implementation:

```python
import asyncio
import json
from playwright.async_api import async_playwright, Page
from typing import Dict, Any, List, Optional, Union


class MicrosoftCopilotScraper:
    def __init__(self):
        self.copilot_events: List[Dict[str, Any]] = []
        self.received_done_event = False

    async def setup_websocket_interceptor(self, page: Page):
        """Set up WebSocket event interception."""
        def on_websocket(ws):
            # Intercept Copilot chat WebSocket
            if "copilot.microsoft.com/c/api/chat" in ws.url:
                ws.on("framereceived", self.websocket_message_handler)

        page.on("websocket", on_websocket)

    def websocket_message_handler(self, message: Union[str, bytes]):
        """Handle incoming WebSocket messages."""
        try:
            parsed = json.loads(message)
        except ValueError:
            return  # skip non-JSON frames instead of raising inside the event handler
        if not isinstance(parsed, dict):
            return
        if parsed.get("event") == "done":
            self.received_done_event = True
        self.copilot_events.append(parsed)

    async def scrape_copilot(self, query: str, country: str = 'US') -> Dict[str, Any]:
        """Main scraping function."""
        # Reset per-scrape state so a reused scraper doesn't mix responses
        self.copilot_events = []
        self.received_done_event = False

        async with async_playwright() as p:
            browser = await p.chromium.launch(headless=False)
            context = await browser.new_context()
            page = await context.new_page()

            # Set up WebSocket interception
            await self.setup_websocket_interceptor(page)

            try:
                # Navigate to Copilot
                await page.goto('https://copilot.microsoft.com/', timeout=20_000)

                # Handle landing page and mode selection
                await self.handle_copilot_landing(page)

                # Fill and submit query
                await page.wait_for_selector("#userInput", state="visible", timeout=10_000)
                await page.fill("#userInput", query)
                await page.keyboard.press("Enter")

                # Wait for response completion
                await self.wait_for_copilot_response(page)

                # Parse the captured events (CopilotWebSocketParser from the parsing section)
                parser = CopilotWebSocketParser()
                result = parser.parse_websocket_events(self.copilot_events)

                # Get HTML content for markdown conversion if there is an answer
                if result.get("text"):
                    result["html_content"] = await self.extract_html_content(page)

                return result
            finally:
                await browser.close()

    async def handle_copilot_landing(self, page: Page):
        """Handle Copilot landing page and mode selection."""
        # Wait for mode selection buttons
        await page.wait_for_selector(
            "[data-testid='composer-chat-mode-quick-button'], [data-testid='composer-chat-mode-smart-button']",
            timeout=5_000,
        )

        # Navigate to chat mode using keyboard shortcuts (Tab, Tab, Enter)
        for _ in range(2):
            await page.keyboard.press("Tab")
            await asyncio.sleep(0.1)
        await page.keyboard.press("Enter")
        await asyncio.sleep(1)

        # Additional navigation to input field
        for _ in range(5):
            await page.keyboard.press("Tab")
            await asyncio.sleep(0.1)
        await page.keyboard.press("Enter")
        await asyncio.sleep(0.5)

    async def wait_for_copilot_response(self, page: Page, timeout: int = 60):
        """Wait for Copilot response completion."""
        for _ in range(timeout * 2):  # Check every 500ms
            await self.solve_captcha_if_needed(page)
            # If the done frame was captured, we can return
            if self.received_done_event:
                break
            await asyncio.sleep(0.5)
        else:
            raise TimeoutError(f"Never received Copilot response after {timeout} seconds")

    async def solve_captcha_if_needed(self, page: Page):
        """Handle captcha challenges if encountered."""
        # Placeholder: integrate your captcha-solving service here
        pass

    async def extract_html_content(self, page: Page) -> str:
        """Extract HTML content from Copilot response."""
        try:
            # Get the AI message content
            html_content = await page.locator(
                "[class*='group/ai-message-item']"
            ).first.inner_html(timeout=2_000)
            return html_content or ""
        except Exception:
            return ""
```

Cookie management for session persistence:

```python
# Simple cookie management based on actual implementation
from typing import Dict, List, Optional

from playwright.async_api import Page


class CookieStash:
    """Manage cookies for persistent sessions across scrapes."""

    def __init__(self):
        self.cookies_cache = {}

    async def save_cookies(self, proxy_ip: str, domain: str, cookies: List[Dict]):
        """Save cookies for reuse."""
        cache_key = f"{proxy_ip}:{domain}"
        self.cookies_cache[cache_key] = cookies

    async def get_cookies(self, proxy_ip: str, domain: str) -> Optional[List[Dict]]:
        """Retrieve cached cookies."""
        cache_key = f"{proxy_ip}:{domain}"
        return self.cookies_cache.get(cache_key)


# Usage in scraper. run_with_cookie_stash is an illustrative wrapper; proxy_ip is
# the IP of the proxy the browser context was launched with.
cookie_stash = CookieStash()
COPILOT_URL = "https://copilot.microsoft.com/"


async def run_with_cookie_stash(page: Page, proxy_ip: str):
    # Load existing cookies before navigation
    existing_cookies = await cookie_stash.get_cookies(proxy_ip, COPILOT_URL)
    if existing_cookies:
        try:
            await page.context.add_cookies(existing_cookies)
        except Exception as e:
            print(f"Failed to load cached cookies: {e}")

    await page.goto(COPILOT_URL)
    # ... submit the query and wait for the done frame, as in MicrosoftCopilotScraper ...

    # Save cookies after successful session
    cookies = await page.context.cookies()
    await cookie_stash.save_cookies(proxy_ip, COPILOT_URL, cookies)
```

Parsing streaming text and citations

Copilot’s mixed WebSocket events need careful parsing to reconstruct the full response.
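If you only need the answer with numbered markers, the event stream alone is enough and the rendered HTML isn't required. The sketch below writes `[n]` after the text each citation follows. Here `n` is the URL's position in a first-seen, deduplicated sources list, which is the same numbering `flatten_citation_pills` produces. The function name is hypothetical.

```python
from typing import Any, Dict, List, Tuple


def render_with_markers(events: List[Dict[str, Any]]) -> Tuple[str, List[Dict[str, Any]]]:
    """Interleave [n] markers into the answer text and return (text, sources)."""
    out: List[str] = []
    sources: List[Dict[str, Any]] = []
    index_by_url: Dict[str, int] = {}

    for event in events:
        kind = event.get("event")
        if kind == "appendText":
            out.append(event.get("text", ""))
        elif kind == "citation":
            url = event.get("url", "")
            if url not in index_by_url:
                # First time this URL appears: give it the next source number
                sources.append({"position": len(sources) + 1, "label": event.get("title", ""), "url": url})
                index_by_url[url] = len(sources)
            # Consecutive citations (one pill) naturally render as [1][2]
            out.append(f"[{index_by_url[url]}]")
        elif kind == "done":
            break

    return "".join(out), sources
```

A URL repeated inside the same pill would print its marker twice. If that matters for your rendering, de-duplicate per pill.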

Markdown conversion with citations:

```python
import re
from typing import Any, Dict, List

import html2text
from bs4 import BeautifulSoup


def convert_html_to_markdown_with_links(
    html_content: str, citation_pills: List[List[Dict[str, Any]]]
) -> str:
    """
    Convert Copilot HTML to markdown, replacing citation buttons with proper links.
    """
    if not html_content:
        return ""

    # Parse HTML
    soup = BeautifulSoup(html_content, "html.parser")

    # Remove unwanted elements
    reactions_div = soup.find(attrs={"data-testid": "message-item-reactions"})
    if reactions_div:
        reactions_div.decompose()

    citation_cards = soup.find(attrs={"data-testid": "citation-cards-row"})
    if citation_cards:
        citation_cards.decompose()

    # Find all citation buttons (rounded-md class); the i-th button is paired with the i-th pill
    buttons = soup.find_all("button", {"class": "rounded-md"})
    button_index = 0
    pill_index = 0

    # Replace each citation button with actual links
    while button_index < len(buttons) and pill_index < len(citation_pills):
        pill_links = citation_pills[pill_index]
        button = buttons[button_index]

        # Create anchor elements for each link in the pill
        new_anchors = []
        for link_data in pill_links:
            url = link_data.get("url") or ""
            source_text = link_data.get("label") or url  # fall back to the URL if the title is empty
            new_anchor = soup.new_tag("a", href=url)
            new_anchor.string = source_text
            new_anchors.append(new_anchor)

        # Insert all anchors after the button (reversed keeps their order) and remove the button
        for anchor in reversed(new_anchors):
            button.insert_after(anchor)
        button.decompose()

        button_index += 1
        pill_index += 1

    # Convert to markdown
    h = html2text.HTML2Text()
    h.ignore_links = False
    h.ignore_images = False
    h.body_width = 0
    h.unicode_snob = True
    h.skip_internal_links = False
    markdown = h.handle(str(soup))

    # Clean up whitespace
    markdown = re.sub(r"\n\s*\n\s*\n", "\n\n", markdown)
    markdown = markdown.replace("\\n\\n", "\n\n")
    markdown = markdown.replace("\\n", "\n")

    return markdown.strip()
```

Citation analysis:

```python
from typing import Any, Dict, List
from urllib.parse import urlparse


def is_microsoft_url(url: str) -> bool:
    """True if the URL's host is microsoft.com or one of its subdomains.

    Matching on the hostname (not a substring) avoids counting e.g.
    "notmicrosoft.com" or a query string that mentions microsoft.com.
    """
    host = (urlparse(url).hostname or "").lower()
    return host == "microsoft.com" or host.endswith(".microsoft.com")


def analyze_citation_patterns(events: List[Dict[str, Any]]) -> Dict[str, Any]:
    """
    Analyze citation patterns in Copilot responses for insights.
    """
    citations = []
    text_events = []

    for event in events:
        if event.get("event") == "citation":
            citations.append({
                "title": event.get("title", ""),
                "url": event.get("url", ""),
                "position": len(citations) + 1,
            })
        elif event.get("event") == "appendText":
            text_events.append(event.get("text", ""))

    microsoft_sources = sum(1 for c in citations if is_microsoft_url(c["url"]))

    return {
        "total_citations": len(citations),
        "citation_density": len(citations) / len(text_events) if text_events else 0,
        # Characters of answer text per citation
        "average_text_between_citations": len("".join(text_events)) / len(citations) if citations else 0,
        "microsoft_sources": microsoft_sources,
        "external_sources": len(citations) - microsoft_sources,
    }


def extract_microsoft_knowledge_focus(text: str) -> Dict[str, Any]:
    """
    Analyze text to identify Microsoft ecosystem focus areas.
    """
    microsoft_products = [
        "Microsoft 365", "Office 365", "SharePoint", "Teams", "Outlook",
        "Azure", "Visual Studio", "Power Platform", "Power BI", "Power Apps",
        "Windows", "Active Directory", "Exchange", "OneDrive",
    ]

    product_mentions = {}
    lowered = text.lower()
    for product in microsoft_products:
        # Plain substring count: "Teams" also matches the generic word "teams"
        count = lowered.count(product.lower())
        if count > 0:
            product_mentions[product] = count

    return {
        "total_product_mentions": sum(product_mentions.values()),
        "mentioned_products": product_mentions,
        "has_microsoft_focus": len(product_mentions) > 0,
        "primary_products": sorted(product_mentions.items(), key=lambda x: x[1], reverse=True)[:3],
    }
```

Using cloro’s managed Copilot scraper

The maintenance cost on Copilot is dominated by Microsoft session-cookie expiry, not protocol parsing. The appendText/citation/done frame shapes are stable; the cookie expiration window is measured in minutes and changes without notice. cloro’s /v1/monitor/copilot endpoint runs the cookie-stash + IP-rotation pool that keeps sessions alive across batches.

API integration:

```python
import requests
import json

# Your Microsoft ecosystem query
query = "How can I improve team productivity using Microsoft 365 tools?"

# API request to cloro
response = requests.post(
    'https://api.cloro.dev/v1/monitor/copilot',
    headers={'Authorization': 'Bearer YOUR_API_KEY'},
    json={
        'prompt': query,
        'country': 'US',
        'include': {
            'markdown': True,
            'html': True
        }
    }
)

result = response.json()
print(json.dumps(result, indent=2))
```

What /v1/monitor/copilot handles for you:

- Cookie stash + IP rotation. Sessions are pinned to IP, refreshed before expiry, rotated on detection.

- Frame-handler completion detection. No timeout-based polling: the response returns the moment the `done` frame lands.

- Citation-pill grouping done correctly. The `[1][2]` inline markers in the answer body line up with positions in the `sources[]` array; no off-by-one drift when Copilot bunches three citations together.

- Microsoft-domain attribution rate. Returns a `microsoft_source_pct` field per response, useful for tracking ecosystem citation share over time.

- Mode-aware navigation. Quick vs Smart mode selectable via parameter; the keyboard-shortcut walk is handled internally.

- Markdown reconstruction with linked citations. The HTML + citation pill markup is converted into clean markdown with inline anchor links (not just `[1][2]` references), ready to feed into downstream LLM analysis.

Structured output:

```json
{
  "status": "success",
  "result": {
    "text": "To improve team productivity using Microsoft 365 tools, I recommend implementing the following strategies: utilize SharePoint for document collaboration, leverage Teams for communication, use Power Automate for workflow automation...",
    "sources": [
      {
        "position": 1,
        "url": "https://docs.microsoft.com/en-us/microsoft-365/",
        "label": "Microsoft 365 Documentation",
        "description": "Official documentation for Microsoft 365 productivity tools and features..."
      },
      {
        "position": 2,
        "url": "https://learn.microsoft.com/en-us/sharepoint/",
        "label": "SharePoint Documentation",
        "description": "Comprehensive guide to SharePoint for document management and collaboration..."
      }
    ],
    "markdown": "**To improve team productivity using Microsoft 365 tools**, I recommend implementing the following strategies...",
    "html": "https://storage.cloro.dev/results/c45a5081-808d-4ed3-9c86-e4baf16c8ab8/page-1.html"
  }
}
```

Why teams pick cloro for Copilot specifically:

- Cookie-stash pool that survives Microsoft’s eviction. No mid-batch session breaks; the pool is sized to outlast the eviction window.

- WebSocket frame parsing battle-tested against Quick + Smart modes. Both modes’ citation patterns produce identical output shapes from the API.

- Mode-selection keyboard walk maintained centrally. Microsoft tweaks the UI ordering — that’s our integration test, not yours.

- Microsoft-source attribution rate as a first-class field. No need to post-process URLs to compute `microsoft.com` share; it’s returned per response.

Building this in-house typically runs $3,000–6,000/month: residential proxies, WebSocket fleet, the cookie-stash service, and the on-call rotation when Microsoft’s session-eviction window shifts.

If you need a custom build, the protocol map above is the starting point. Expect ongoing work on session-cookie maintenance — Microsoft has tightened the eviction window twice in 2025, and the Quick/Smart mode buttons swapped tab-order positions in the latest UI update.
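For a custom build, one way to keep stale sessions from being replayed is to give each stash entry a time-to-live. The sketch below is an in-memory version. The class name and the 300-second default are placeholders, not a measured value of Microsoft’s eviction window, which this article describes as minutes-scale and shifting. A multi-worker setup would likely want a shared store instead of process memory.

```python
import time
from typing import Any, Dict, List, Optional, Tuple


class TTLCookieStash:
    """Cookie stash keyed on (proxy_ip, origin) whose entries expire (hypothetical)."""

    def __init__(self, ttl_seconds: float = 300.0) -> None:
        self.ttl = ttl_seconds  # placeholder; tune to the eviction window you observe
        self._store: Dict[Tuple[str, str], Tuple[float, List[Dict[str, Any]]]] = {}

    def save(self, proxy_ip: str, origin: str, cookies: List[Dict[str, Any]],
             now: Optional[float] = None) -> None:
        saved_at = time.monotonic() if now is None else now
        self._store[(proxy_ip, origin)] = (saved_at, cookies)

    def get(self, proxy_ip: str, origin: str,
            now: Optional[float] = None) -> Optional[List[Dict[str, Any]]]:
        entry = self._store.get((proxy_ip, origin))
        if entry is None:
            return None
        saved_at, cookies = entry
        current = time.monotonic() if now is None else now
        if current - saved_at > self.ttl:
            # Stale: drop it so the caller starts a fresh session
            del self._store[(proxy_ip, origin)]
            return None
        return cookies
```

The optional `now` argument exists only so expiry can be tested without sleeping.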

Get started with cloro’s Copilot API and skip the cookie-stash maintenance.

Frequently asked questions

How does Copilot scraping differ from ChatGPT?

Copilot relies heavily on WebSocket events for real-time streaming, whereas ChatGPT primarily uses Server-Sent Events (SSE). You need to intercept different network protocols.

Can I scrape Copilot without a Microsoft account?

It is difficult. Copilot often requires authentication or strict session cookies. Managing these sessions is the hardest part of scraping Copilot.

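Is it possible to extract the specific sources Copilot uses?

Yes. Each source arrives as a `citation` event (with `title` and `url`) in the WebSocket stream, interleaved with the `appendText` events. A good scraper groups these into pills and links them to the citation markers in the text.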

What is the WebSocket event parsing challenge in Copilot?

Copilot sends text and citation events interleaved via WebSocket. The challenge is to parse these real-time events and accurately reconstruct the complete response, including grouping citations.

What makes Copilot responses valuable for businesses?

Copilot integrates with the Microsoft ecosystem and real-time web search, providing context-aware responses with citations that leverage deep Microsoft product knowledge, offering unique enterprise intelligence.

Related reading

How to scrape ChatGPT: parse SSE streams + bypass Cloudflare

Scrape ChatGPT in 2026: parse the Server-Sent Events stream, expand lazy-loaded citations, and bypass Cloudflare with residential proxies.

How to scrape Google Gemini: parse internal API confidence scores

Scrape Google Gemini in 2026: intercept the internal API protocol, extract confidence-scored structured outputs, and handle Google account session checks.

How to scrape Perplexity: capture citations from event streams

Scrape Perplexity AI in 2026: extract the cited sources block from the SSE stream, parse Related Questions JSON, and handle Cloudflare protection.