How to scrape Perplexity: capture citations from event streams

Perplexity ships its answer as a stream of typed blocks — markdown_block, web_result_block, shopping_block, media_block, hotels_mode_block, maps_mode_block — and the block list depends on which intent classifier won. A shopping query gets a shopping_block with merchant offers. A travel query gets a hotels_mode_block with rated places and lat/lng. The Sonar API exposes none of this; it returns text and a flat citation list.

The trick to scraping Perplexity is recognising which final_sse_message block shape you’re going to get before the answer streams, then parsing the matching block schema. Get the intent wrong and you’ll miss half the structured data.

After parsing 5M+ Perplexity responses at cloro, here’s the block-shape map and the intent-classifier signals.

Table of contents

- Why scrape Perplexity responses?

- Understanding Perplexity’s architecture

- The Server-Sent Events parsing challenge

- Building the scraping infrastructure

- Query intent detection and data extraction

- Using cloro’s managed Perplexity scraper

Why scrape Perplexity responses?

Perplexity’s intent classifier is the differentiator. Ask “best running shoes for marathons” and the response is a shopping_block with eight products, each carrying merchant, price, original_price, rating, num_reviews, and an offers[] array. Ask “hotels near Lake Como” and the same answer slot becomes a hotels_mode_block with lat/lng, address arrays, price_level enum, and image collections. The Sonar API returns text and a flat URL list for both.

What only exists in the SSE block stream:

- shopping_block.products[].offers[]: per-merchant pricing, original_price, availability, image URLs

- hotels_mode_block.places[] and maps_mode_block.places[]: rated places with phone, address, coordinates, price_level, categories

- media_block.media_items[]: videos and images with thumbnail dimensions, source platform, duration

- web_result_block.web_results[]: citation positions tied back to inline [1][2] markers in the answer

- related_query_items[]: the suggested next queries Perplexity displays under the answer
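The web_result_block entry is what makes citation capture possible: once the answer text and the web_results list are both in hand, each inline marker can be resolved to a URL. Here is a minimal sketch, assuming markers are 1-based and follow web_results order (the payload is undocumented, so treat this as an assumption). The function name and output keys are illustrative, and `sources` uses the position/url shape produced by the source extractor later in this article.

```python
import re
from typing import Any, Dict, List


def link_inline_citations(answer: str, sources: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Pair each inline [n] marker in the answer with the source at position n."""
    by_position = {source["position"]: source for source in sources}
    linked = []
    for match in re.finditer(r"\[(\d+)\]", answer):
        marker = int(match.group(1))
        source = by_position.get(marker)
        linked.append({
            "marker": marker,
            "offset": match.start(),  # character offset of the marker in the answer
            "url": source["url"] if source else None,  # None: marker has no matching source
        })
    return linked
```

A marker with no matching source is kept with `url` set to None rather than dropped, so a mismatch between the answer and the citation list stays visible.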

Use cases the block-stream supports that Sonar can’t:

- E-commerce price monitoring. “Track when iPhone 17 Pro shows up in Perplexity’s shopping_block and what merchants get the offer slot.”

- Hotel/place SERP tracking. “Which hotels rank in Perplexity for ‘best boutique hotels NYC’ over 30 days?”

- Citation rank-tracking. “Where does our docs page sit in the web_results list for branded comparison queries?”

- Related-query mining. “What does Perplexity suggest as follow-ups to high-intent buyer prompts?”
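The citation rank-tracking use case reduces to a lookup over the parsed source list. A rough sketch follows, assuming each source carries the `position` and `url` keys produced by the source extractor later in this article. `citation_rank` is a hypothetical helper, and the subdomain matching rule is a design choice, not something Perplexity defines.

```python
from typing import Any, Dict, List, Optional
from urllib.parse import urlparse


def _strip_www(host: str) -> str:
    """Lower-case a hostname and drop a leading 'www.'."""
    host = host.lower()
    return host[4:] if host.startswith("www.") else host


def citation_rank(sources: List[Dict[str, Any]], domain: str) -> Optional[int]:
    """Return the position of the first source hosted on `domain` or a subdomain of it."""
    target = _strip_www(domain)
    for source in sources:
        host = _strip_www(urlparse(source.get("url", "")).hostname or "")
        if host == target or host.endswith("." + target):
            return source["position"]
    return None  # domain was not cited
```

Logging the returned position per query per day is enough to chart rank movement over a 30-day window.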

For the broader landscape, see our piece on AI Search Engines.

Understanding Perplexity’s architecture

Perplexity stitches together several systems to produce its search results.

Response generation process:

- Query analysis. Classifies search intent (shopping, travel, media, general).

- Search integration. Runs real-time web searches across multiple sources.

- AI synthesis. Uses LLMs to synthesize information with citations.

- Structured extraction. Pulls rich data objects based on intent.

- Streaming response. Delivers results via Server-Sent Events (SSE).

Multi-modal response structure:

// Perplexity combines text, sources, and rich data objects
{
  answer: "AI-generated response with citations [1][2]",
  sources: ["https://example.com/source1", "https://example.com/source2"],
  shoppingCards: [...], // When shopping intent detected
  videos: [...],        // When media intent detected
  hotels: [...]         // When travel intent detected
}

Server-Sent Events format:

event: message
data: {"final_sse_message": false, "blocks": [{"markdown_block": {"answer": "Hello"}}]}

event: message
data: {"final_sse_message": false, "blocks": [{"markdown_block": {"answer": "Hello world"}}]}

event: message
data: {"final_sse_message": true, "blocks": [...], "web_results": [...]}

Query intent detection:

- Shopping queries → Product cards with pricing

- Travel queries → Hotel listings and places

- Media queries → Videos and images

- General queries → Text with citations

Anti-bot detection:

- Request pattern analysis

- Browser fingerprinting

- Rate limiting with exponential backoff

- Dynamic content loading challenges

The Server-Sent Events parsing challenge

Most of the work in scraping Perplexity is parsing the SSE stream and extracting structured data blocks.

SSE event structure:

# Raw Perplexity SSE example
event: message
data: {"final_sse_message": false, "blocks": [{"markdown_block": {"answer": "Recent"}}]}

event: message
data: {"final_sse_message": false, "blocks": [{"markdown_block": {"answer": "developments"}}]}

event: message
data: {"final_sse_message": true, "blocks": [...], "web_results": [...]}

Parsing challenges:

- Multi-event streaming. Content arrives across multiple SSE events.

- Final message detection. Only the last event contains complete structured data.

- Block-based structure. Different data types live in separate blocks.

- Mixed content types. Text, sources, media, and structured objects all combined.
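The first two challenges come down to splitting the stream into events and keeping the last payload flagged as final. The sketch below is a tolerant splitter. It handles CRLF line endings and `data:` fields spread over several lines, both of which the SSE format permits. Whether Perplexity actually emits either is an assumption; `iter_sse_json` and `last_final_message` are illustrative names.

```python
import json
from typing import Any, Dict, Iterator, Optional


def iter_sse_json(raw: str) -> Iterator[Dict[str, Any]]:
    """Yield the decoded JSON object carried by each SSE event in `raw`."""
    # Normalise CRLF and bare CR line endings to LF before splitting.
    text = raw.replace("\r\n", "\n").replace("\r", "\n")
    for chunk in text.split("\n\n"):
        data_lines = []
        for line in chunk.split("\n"):
            if line.startswith("data:"):
                value = line[5:]
                # One optional space after the colon is not part of the value.
                data_lines.append(value[1:] if value.startswith(" ") else value)
        if not data_lines:
            continue
        try:
            # Multiple data lines in one event are joined with newlines.
            payload = json.loads("\n".join(data_lines))
        except json.JSONDecodeError:
            continue  # skip keep-alives or partial events
        if isinstance(payload, dict):
            yield payload


def last_final_message(raw: str) -> Optional[Dict[str, Any]]:
    """Return the last event whose final_sse_message flag is set, if any."""
    final = None
    for payload in iter_sse_json(raw):
        if payload.get("final_sse_message"):
            final = payload
    return final
```

Scanning forward and keeping the last match avoids assuming that the final event is also the last event in the stream.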

Python SSE parsing implementation:

import json
from typing import List, Dict, Any, Optional


def get_last_final_message(sse_response: str) -> Optional[dict]:
    """
    Extract the last message with final_sse_message=true from a Perplexity SSE response.
    """
    messages = sse_response.strip().split("\n\n")

    for message in reversed(messages):
        if not message.startswith("event: message"):
            continue

        # Extract the data line
        for line in message.split("\n"):
            if line.startswith("data: "):
                try:
                    data = json.loads(line[6:])  # Remove 'data: ' prefix
                except json.JSONDecodeError:
                    continue
                # Check if this is the final message
                if data.get("final_sse_message"):
                    return data

    return None


def extract_answer_text(final_message_data: Optional[dict]) -> str:
    """
    Extract the answer text from the final message data.
    """
    if not final_message_data:
        return ""

    blocks = final_message_data.get("blocks", [])
    for block in blocks:
        if "markdown_block" in block:
            return block["markdown_block"].get("answer", "")

    return ""

Source extraction from web results:

def extract_perplexity_sources(final_message_data: Optional[dict]) -> List[Dict[str, Any]]:
    """
    Extract sources from Perplexity SSE response.
    """
    sources = []

    if not final_message_data:
        return sources

    # Extract web_results from blocks
    blocks = final_message_data.get("blocks", [])
    for block in blocks:
        # Check for web_result_block
        if "web_result_block" in block:
            web_results = block["web_result_block"].get("web_results", [])
            for idx, result in enumerate(web_results, start=1):
                # meta_data may be missing or null, so fall back to an empty dict
                meta_data = result.get("meta_data") or {}
                sources.append({
                    "position": idx,
                    "label": result.get("name", ""),
                    "url": result.get("url", ""),
                    "description": result.get("snippet") or meta_data.get("description"),
                })

    return sources

Building the scraping infrastructure

Perplexity’s stack adds two requirements ChatGPT scraping doesn’t have: an intent-classifier inspector and a block-aware schema dispatcher. Without those you’ll either crash on unexpected blocks or silently drop half the structured data.
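To make the "block-aware schema dispatcher" idea concrete, here is a hedged sketch of a tagged-union dispatch over the final event's blocks. It assumes each entry in `blocks` is a dict whose payload sits under a key ending in `_block`, which matches the examples in this article but is not documented anywhere. The list-field names come from the extractors shown in this article; `dispatch_blocks` is a hypothetical helper.

```python
from typing import Any, Dict, List

# Block tag -> name of the list field inside that block's payload.
BLOCK_ITEM_FIELDS = {
    "web_result_block": "web_results",
    "shopping_block": "products",
    "media_block": "media_items",
    "hotels_mode_block": "places",
    "maps_mode_block": "places",
}


def dispatch_blocks(final_message: Dict[str, Any]) -> Dict[str, Any]:
    """Group block payloads by tag and record any tag the parser does not know."""
    items: Dict[str, List[Any]] = {tag: [] for tag in BLOCK_ITEM_FIELDS}
    answer = ""
    unknown: List[str] = []
    for block in final_message.get("blocks", []):
        if not isinstance(block, dict):
            continue
        for tag, payload in block.items():
            # Assumption: keys that do not end in "_block" are metadata, not payloads.
            if not tag.endswith("_block") or not isinstance(payload, dict):
                continue
            if tag == "markdown_block":
                answer = payload.get("answer", "")
            elif tag in BLOCK_ITEM_FIELDS:
                items[tag].extend(payload.get(BLOCK_ITEM_FIELDS[tag], []))
            else:
                unknown.append(tag)  # surfaced instead of crashing or silently dropping
    return {"answer": answer, "items": items, "unknown_blocks": unknown}
```

Returning `unknown_blocks` instead of raising addresses both failure modes named above: the parser does not crash on a new block type, and the new type does not vanish unnoticed.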

Required components for Perplexity specifically:

- Network interceptor scoped to rest/sse/perplexity_ask. Perplexity dispatches multiple background calls (search hydration, autocomplete, telemetry); only this URL carries the answer stream.

- Block-shape dispatcher. Read the blocks[] list of the final event and branch on markdown_block vs shopping_block vs hotels_mode_block vs maps_mode_block vs media_block. Each has a different schema; treat them as a tagged union.

- final_sse_message: true reader. Intermediate events stream tokens; only the final event carries the complete blocks list. Parse the last event with the flag set, not the last event period.

- answer_modes and classifier_results.shopping_intent inspector. Lets you assert “I expected a shopping block, did I get one?” This is useful for catching silent classifier flips.

- Cloudflare-aware navigation. Perplexity uses Cloudflare similar to ChatGPT but with a tighter rate-limit window per IP. A residential pool with one request per IP per 60s is the safe cadence for batch monitoring.
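The one-request-per-IP-per-60s cadence from the last item can be enforced with a small pacer in front of the proxy pool. This is a sketch under stated assumptions: `ProxyPacer` is a hypothetical class, proxies are identified by opaque strings, and the 60-second default simply mirrors the figure above.

```python
import time
from typing import Callable, Dict, List, Optional


class ProxyPacer:
    """Hand out proxies so that each one rests `min_interval` seconds between uses."""

    def __init__(self, proxies: List[str], min_interval: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._last_used: Dict[str, Optional[float]] = {proxy: None for proxy in proxies}
        self._min_interval = min_interval
        self._clock = clock  # injectable so the pacer can be tested without sleeping

    def acquire(self) -> Optional[str]:
        """Return a rested proxy and mark it used, or None if all are cooling down."""
        now = self._clock()
        for proxy, last in self._last_used.items():
            if last is None or now - last >= self._min_interval:
                self._last_used[proxy] = now
                return proxy
        return None

    def seconds_until_ready(self) -> float:
        """Seconds until at least one proxy is usable again (0.0 if one is ready now)."""
        now = self._clock()
        waits = [
            0.0 if last is None else max(0.0, last + self._min_interval - now)
            for last in self._last_used.values()
        ]
        return min(waits) if waits else 0.0
```

A batch loop would call `acquire()`, and when it returns None, sleep for `seconds_until_ready()` before trying again.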

Complete scraper implementation:

import asyncio
import json
from typing import Dict, Any, List, Optional

from playwright.async_api import async_playwright, Page


class PerplexityScraper:
    def __init__(self):
        self.captured_responses = []

    async def setup_sse_interceptor(self, page: Page):
        """Set up Server-Sent Events interception."""
        async def handle_response(response):
            # Capture Perplexity SSE responses
            if 'rest/sse/perplexity_ask' in response.url:
                response_body = await response.text()
                self.captured_responses.append(response_body)

        page.on('response', handle_response)

    async def scrape_perplexity(self, query: str, country: str = 'US') -> Dict[str, Any]:
        """Main scraping function. `country` is accepted but not used in this listing."""
        # Drop responses left over from a previous call on the same instance
        self.captured_responses = []

        async with async_playwright() as p:
            browser = await p.chromium.launch(headless=False)
            context = await browser.new_context()
            page = await context.new_page()

            # Set up SSE interception
            await self.setup_sse_interceptor(page)

            try:
                # Navigate to Perplexity
                await page.goto('https://www.perplexity.ai', timeout=20_000)

                # Handle any modals or popups
                await self.remove_dialogs(page)

                # Fill and submit query
                await page.wait_for_selector('#ask-input', state="visible", timeout=10_000)
                await page.fill('#ask-input', query)
                await page.click('[data-testid="submit-button"]', timeout=5_000)

                # Wait for SSE response
                await self.wait_for_perplexity_response(page)

                # Parse the captured response
                if self.captured_responses:
                    raw_response = self.captured_responses[0]
                    return self.parse_perplexity_response(raw_response)
                else:
                    raise Exception("No SSE response captured")
            finally:
                await browser.close()

    async def remove_dialogs(self, page: Page):
        """Remove any modal dialogs or popups."""
        await page.evaluate("""
            // Remove all portal elements
            const elements = document.querySelectorAll("[data-type='portal']");
            elements.forEach(element => {
                element.remove();
            });
        """)

    async def wait_for_perplexity_response(self, page: Page, timeout: int = 60):
        """Wait for Perplexity SSE response completion."""
        for _ in range(timeout * 2):  # Check every 500ms
            # Check if we have captured responses
            if self.captured_responses:
                # Verify response contains final message
                final_message = get_last_final_message(self.captured_responses[0])
                if final_message:
                    return
            await asyncio.sleep(0.5)

        raise Exception(f"Response timeout after {timeout} seconds")

    def parse_perplexity_response(self, sse_response: str) -> Dict[str, Any]:
        """Parse the raw Perplexity SSE response into structured data."""
        # Extract final message data
        final_message_data = get_last_final_message(sse_response)

        # Extract core content
        text = extract_answer_text(final_message_data)
        sources = extract_perplexity_sources(final_message_data)

        result = {
            'text': text,
            'sources': sources,
        }

        # Extract shopping products if shopping intent detected
        if has_shopping_intent(final_message_data):
            shopping_cards = extract_perplexity_shopping_products(final_message_data)
            if shopping_cards:
                result['shopping_cards'] = shopping_cards

        # Extract media content
        media = extract_perplexity_media(final_message_data)
        if media['videos']:
            result['videos'] = media['videos']
        if media['images']:
            result['images'] = media['images']

        # Extract travel data
        if has_places_intent(final_message_data):
            hotels_places = extract_perplexity_hotels_and_places(final_message_data)
            if hotels_places['hotels']:
                result['hotels'] = hotels_places['hotels']
            if hotels_places['places']:
                result['places'] = hotels_places['places']

        # Extract related queries
        related_queries = extract_related_queries(final_message_data)
        if related_queries:
            result['related_queries'] = related_queries

        return result

Query intent detection and data extraction

Perplexity classifies query types and emits matching structured data.
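The scraper class calls `has_places_intent`, which this article never defines. One way to fill the gap, offered as an assumption and not as Perplexity's own signal, is to infer places intent from the presence of a hotels_mode_block or maps_mode_block in the final event:

```python
from typing import Optional

# Block tags that carry place listings, as named in this article.
PLACE_BLOCK_KEYS = ("hotels_mode_block", "maps_mode_block")


def has_places_intent(final_message_data: Optional[dict]) -> bool:
    """Return True if the final event contains a hotels or maps block."""
    if not final_message_data:
        return False
    return any(
        isinstance(block, dict) and any(key in block for key in PLACE_BLOCK_KEYS)
        for block in final_message_data.get("blocks", [])
    )
```

Unlike the shopping check, this reads the blocks themselves and not a classifier field, so it cannot tell you when the classifier chose places intent but emitted no block.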

Shopping intent detection:

def has_shopping_intent(final_message_data: Optional[dict]) -> bool:
    """
    Check if the response indicates shopping intent.
    """
    if not final_message_data:
        return False

    # Check answer modes for shopping
    answer_modes = final_message_data.get("answer_modes", [])
    for mode in answer_modes:
        if isinstance(mode, dict) and mode.get("answer_mode_type") == "SHOPPING":
            return True

    # Check classifier results (the field may be missing or null)
    classifier_results = final_message_data.get("classifier_results") or {}
    return bool(classifier_results.get("shopping_intent", False))


def extract_perplexity_shopping_products(final_message_data: Optional[dict]) -> List[Dict[str, Any]]:
    """
    Extract shopping products from Perplexity response.
    """
    shopping_cards = []

    if not final_message_data:
        return shopping_cards

    blocks = final_message_data.get("blocks", [])
    for block in blocks:
        # Extract from shopping_block
        if "shopping_block" in block:
            shopping_block = block["shopping_block"]
            products = shopping_block.get("products", [])

            for product in products:
                if isinstance(product, dict):
                    product_info = {
                        "title": product.get("name"),
                        "url": product.get("url"),
                        "description": product.get("description"),
                        "price": product.get("price"),
                        "original_price": product.get("original_price"),
                        "rating": product.get("rating"),
                        "num_reviews": product.get("num_reviews"),
                        "image_urls": product.get("image_urls", []),
                        "merchant": product.get("merchant"),
                        "id": product.get("id"),
                        "variants": product.get("variants", []),
                        "offers": product.get("offers", [])
                    }

                    # Each product becomes its own card, tagged with the block's tags
                    shopping_cards.append({
                        "products": [product_info],
                        "tags": shopping_block.get("tags", [])
                    })

    return shopping_cards

Media content extraction:

def extract_perplexity_media(final_message_data: Optional[dict]) -> dict:
    """
    Extract media items (videos and images) from Perplexity response.
    """
    videos = []
    images = []

    if not final_message_data:
        return {"videos": videos, "images": images}

    blocks = final_message_data.get("blocks", [])
    for block in blocks:
        # Extract from media_block
        if "media_block" in block:
            media_block = block["media_block"]
            media_items = media_block.get("media_items", [])

            for item in media_items:
                if isinstance(item, dict):
                    # medium may be missing or null; normalize once and reuse
                    medium = (item.get("medium") or "").lower()

                    media_item = {
                        "title": item.get("name"),
                        "url": item.get("url"),
                        "thumbnail": item.get("thumbnail"),
                        "medium": medium,
                        "source": item.get("source"),
                    }

                    # Add image dimensions
                    for dim_field in ["image_width", "image_height", "thumbnail_width", "thumbnail_height"]:
                        if dim_field in item:
                            try:
                                media_item[dim_field] = int(item[dim_field])
                            except (ValueError, TypeError):
                                pass

                    if medium == "video":
                        videos.append(media_item)
                    elif medium == "image":
                        images.append(media_item)

    return {"videos": videos, "images": images}

Travel data extraction:

def _address_lines(value: Any) -> List[Any]:
    """Return the address as a list, wrapping a bare string and dropping empty values."""
    if isinstance(value, list):
        return value
    return [value] if value else []


def extract_perplexity_hotels_and_places(final_message_data: Optional[dict]) -> dict:
    """
    Extract hotels and places from Perplexity response.
    """
    hotels = []
    places = []

    if not final_message_data:
        return {"hotels": hotels, "places": places}

    blocks = final_message_data.get("blocks", [])
    for block in blocks:
        # Extract from hotels_mode_block
        if "hotels_mode_block" in block:
            hotel_block = block["hotels_mode_block"]
            hotel_places = hotel_block.get("places", [])

            for place in hotel_places:
                if isinstance(place, dict):
                    hotel_item = {
                        "name": place.get("name"),
                        "url": place.get("url", ""),
                        "rating": place.get("rating"),
                        "num_reviews": place.get("num_reviews"),
                        "address": _address_lines(place.get("address")),
                        "phone": place.get("phone"),
                        "description": place.get("description"),
                        "image_url": place.get("image_url"),
                        "images": place.get("images", []),
                        "lat": place.get("lat"),
                        "lng": place.get("lng"),
                        "price_level": place.get("price_level"),
                        "categories": place.get("categories", [])
                    }
                    hotels.append(hotel_item)

        # Extract from maps_mode_block
        elif "maps_mode_block" in block:
            maps_block = block["maps_mode_block"]
            map_places = maps_block.get("places", [])

            for place in map_places:
                if isinstance(place, dict):
                    place_item = {
                        "name": place.get("name"),
                        "url": place.get("url", ""),
                        "address": _address_lines(place.get("address")),
                        "rating": place.get("rating"),
                        "lat": place.get("lat"),
                        "lng": place.get("lng"),
                        "categories": place.get("categories", []),
                        "map_url": place.get("map_url"),
                        "images": place.get("images", [])
                    }
                    places.append(place_item)

    return {"hotels": hotels, "places": places}

Related queries extraction:

def extract_related_queries(final_message_data: Optional[dict]) -> List[str]:

"""

Extract related queries from Perplexity response.

"""

if not final_message_data:

return []

# Extract from related_queries field (preferred source)

queries = final_message_data.get("related_queries", [])

if isinstance(queries, list):

related = [q.strip() for q in queries if isinstance(q, str) and q.strip()]

if related:

return related

# Check related_query_items for text fields

query_items = final_message_data.get("related_query_items", [])

if isinstance(query_items, list):

related = []

for item in query_items:

if isinstance(item, dict):

text = item.get("text")

if isinstance(text, str) and text.strip() and text not in related:

related.append(text.strip())

if related:

return related

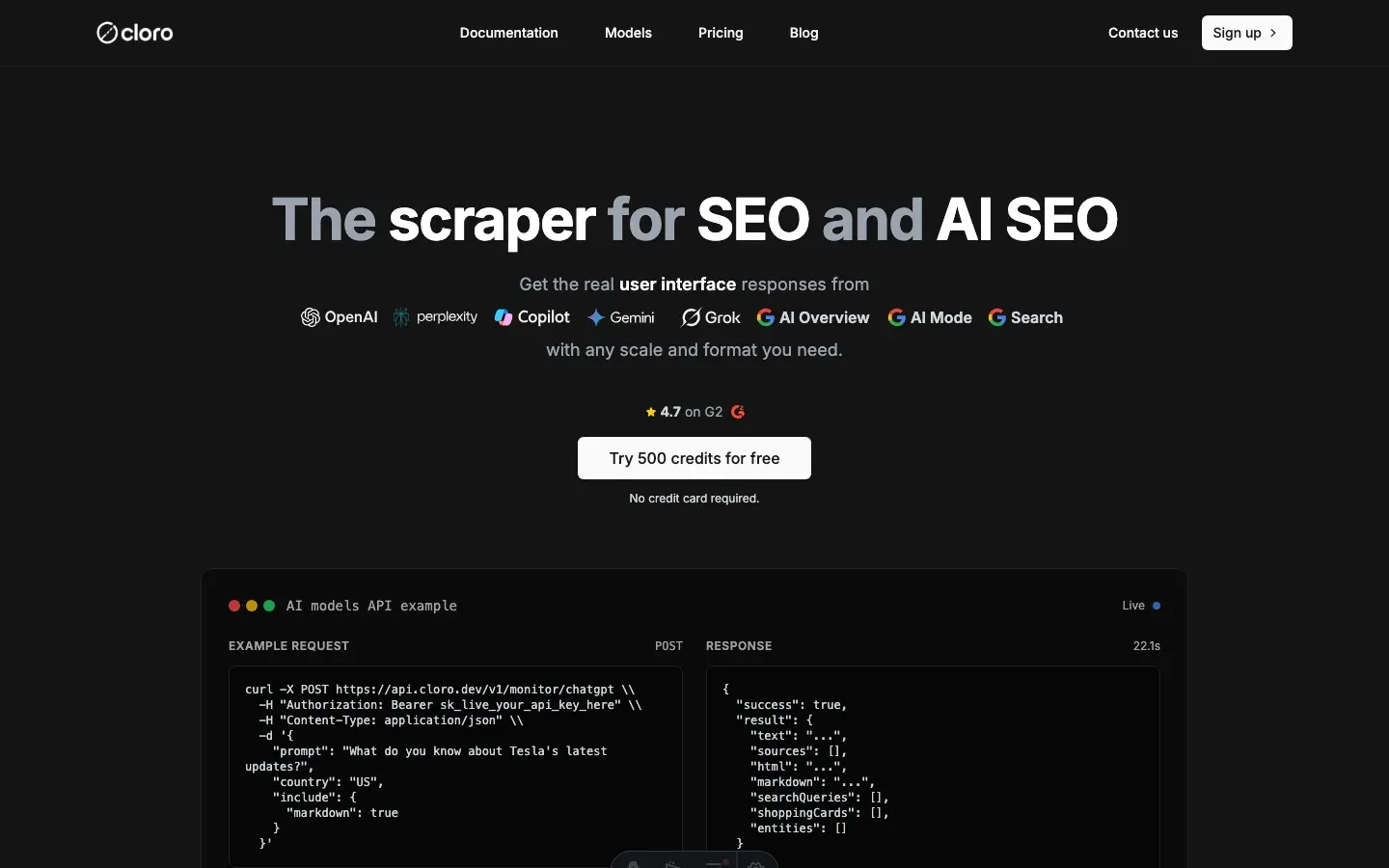

return []Using cloro’s managed Perplexity scraper

The block schemas aren’t documented and they drift. The shopping_block.products[].offers[] shape gained a variants[] field three months ago. The hotels_mode_block adopted a categories[] array. Each change is a parser update plus a schema-test pass. cloro’s /v1/monitor/perplexity endpoint absorbs the schema drift.
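For an in-house parser, drift of this kind can at least be detected by diffing the fields seen in live payloads against the fields the parser knows about. A minimal sketch, assuming the known set is the list of product fields read by the shopping extractor above; `unexpected_fields` is a hypothetical helper:

```python
from typing import Any, Dict, Iterable, Set

# Product fields the shopping extractor in this article reads.
KNOWN_PRODUCT_FIELDS: Set[str] = {
    "name", "url", "description", "price", "original_price", "rating",
    "num_reviews", "image_urls", "merchant", "id", "variants", "offers",
}


def unexpected_fields(items: Iterable[Dict[str, Any]], known: Set[str]) -> Set[str]:
    """Return field names that appear in `items` but are not in `known`."""
    seen: Set[str] = set()
    for item in items:
        if isinstance(item, dict):
            seen.update(item.keys())
    return seen - known
```

Running this over each day's captured products and alerting on a non-empty result turns a silent schema change into a visible one before it causes data loss.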

Simple API integration:

import json

import requests

# Your search query
query = "What are the latest developments in quantum computing 2026?"

# API request to cloro
response = requests.post(
    'https://api.cloro.dev/v1/monitor/perplexity',
    headers={'Authorization': 'Bearer YOUR_API_KEY'},
    json={
        'prompt': query,
        'country': 'US',
        'include': {
            'markdown': True,
            'html': True
        }
    }
)

# Fail loudly on HTTP errors instead of parsing an error body as a result
response.raise_for_status()

result = response.json()
print(json.dumps(result, indent=2))

What /v1/monitor/perplexity handles for you:

- Block-shape dispatch. Whether the response carries shopping, hotels, maps, or media blocks, the parsed output is normalized into stable top-level keys.

- Intent-classifier signals exposed. answer_modes and classifier_results.shopping_intent are surfaced so you can assert what type of answer you got back.

- shopping_block.products[].offers[] schema-stable. When Perplexity adds fields (variants, original_price, merchant variants), our parser absorbs them; the API contract you call doesn’t change.

- Hotels + places normalization. hotels_mode_block and maps_mode_block are merged into a single places[] output with a discriminator so you don’t branch downstream.

- Per-IP rate-limit pacing. Residential session pool tuned for Perplexity’s tighter per-IP cadence.

- Related-query extraction. Both related_queries and related_query_items shapes handled.
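The hotels and places normalization is easy to picture for a self-built pipeline. The sketch below shows the idea only; it is not cloro's implementation. It assumes the `hotels` and `places` lists returned by the travel extractor earlier in this article, and the discriminator name `kind` is invented for illustration.

```python
from typing import Any, Dict, List


def merge_places(hotels: List[Dict[str, Any]], places: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Merge hotel and map listings into one list, tagging each with its origin."""
    merged: List[Dict[str, Any]] = []
    for kind, items in (("hotel", hotels), ("place", places)):
        for item in items:
            # Copy the item so the caller's dicts are not mutated.
            merged.append({**item, "kind": kind})
    return merged
```

Downstream code can then iterate one list and filter on `kind` only where the distinction matters.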

Sample structured output:

{
  "status": "success",
  "result": {
    "text": "Recent developments in quantum computing include breakthrough error correction methods...",
    "sources": [
      {
        "position": 1,
        "url": "https://example.com/quantum-breakthrough",
        "label": "MIT Technology Review",
        "description": "Scientists achieve 99.9% qubit fidelity in room temperature conditions..."
      }
    ],
    "shopping_cards": [
      {
        "products": [
          {
            "title": "Quantum Computing Book",
            "url": "https://example.com/product",
            "price": "$89.99",
            "rating": 4.8,
            "num_reviews": 1250,
            "image_urls": ["https://example.com/image.jpg"],
            "merchant": "TechBooks",
            "offers": [...]
          }
        ],
        "tags": ["education", "quantum"]
      }
    ],
    "videos": [
      {
        "title": "Quantum Computing Explained",
        "url": "https://youtube.com/watch?v=example",
        "thumbnail": "https://example.com/thumb.jpg",
        "medium": "video",
        "source": "youtube"
      }
    ],
    "hotels": [
      {
        "name": "Quantum Research Hotel",
        "url": "https://example.com/hotel",
        "rating": 4.5,
        "address": ["123 Tech Street", "Innovation City"],
        "price_level": "$$$",
        "categories": ["Hotel", "Business"]
      }
    ],
    "related_queries": [
      "What companies are leading quantum computing?",
      "How does quantum error correction work?"
    ]
  }
}

Why teams pick cloro for Perplexity specifically:

- Block-schema versioning is our problem, not yours. When shopping_block.products[].variants[] showed up, customer code didn’t change.

- Intent assertion built in. Pass assert_intent: "shopping" and the response surfaces a flag for whether the classifier matched expectation, which is useful for monitoring drift.

- Hotels and maps unified. No branching between hotels_mode_block and maps_mode_block downstream; both flow into a single places[] output with a discriminator field.

- Tight per-IP pacing. Our session pool is tuned for Perplexity’s specific cooldown window, so no manual backoff tuning is needed.

Building this in-house typically runs $3,000–7,000/month: residential proxies, Playwright fleet, schema-drift monitoring, and the on-call rotation when Perplexity ships an answer-mode change.

If you need a custom build, the block-shape map above is the starting point. Expect schema-drift work — Perplexity has shipped two answer-mode types in the last six months (media got merged into the main flow; places split into hotels vs maps).

Get started with cloro’s Perplexity API and skip the schema-drift maintenance.

Frequently asked questions

How does Perplexity deliver answers?

Perplexity uses Server-Sent Events (SSE) to stream the answer token by token. You need an event stream parser to capture the final output.

Can I scrape the 'Related Questions'?

Yes, these are usually sent as a structured JSON object at the end of the event stream.

Does Perplexity block scrapers?

Yes, they use Cloudflare protection. You need bypass techniques similar to scraping ChatGPT.

What is query intent detection in Perplexity?

Perplexity automatically classifies the user's intent (e.g., shopping, travel, media) and tailors the response by extracting and presenting specific structured data, such as product cards or hotel listings.

What makes Perplexity responses valuable for businesses?

Perplexity combines AI reasoning with real-time web search and structured data extraction, providing comprehensive answers with citations, making it valuable for market research and competitive intelligence.
