Is Web Scraping Legal in 2026? The Definitive Guide (Cases + Rules)

Is website scraping legal? The short answer: it depends. Scraping publicly available data is generally permissible, much like reading a book in a public library. Legality hinges on what data you collect and how you collect it.

Core principles of legal scraping

The real question is risk, not just legality. Every data collection team needs to understand the difference between low-risk and high-risk scraping. Think of it as walking through an open front door versus picking the lock.

One is an everyday action. The other bypasses security and invites legal trouble. The analogy frames the strategy: respect boundaries and signals.

What determines scraping risk

The legal risk of a web scraping project sits on a spectrum, and where you fall is influenced by a few factors. Your approach can mean the difference between routine data gathering and a costly legal battle.

To stay on the right side of the law, consider:

- The type of data. Are you collecting public business data like product prices and SEO keywords, or personal data like names, emails, and photos?

- The access method. Are you gathering data openly visible to any visitor, or are you trying to get behind a login, paywall, or CAPTCHA?

- The impact on the website. Is your scraper behaving like a considerate visitor, or is it overwhelming the server with rapid-fire requests?

The most critical distinction in the “is website scraping legal” debate is whether the data is public or private. U.S. courts, most notably the Ninth Circuit in hiQ Labs v. LinkedIn, have held that accessing information available to any internet user without a password is not “unauthorized access” under the federal anti-hacking law.

Low risk vs. high risk activities

What separates a safe project from a dangerous one? A low-risk approach focuses on public, non-sensitive information and respects the website’s technical infrastructure. High-risk approaches involve personal data, bypassing barriers, and ignoring a site’s rules.

The table below breaks down the factors that determine your project’s risk level.

Legal risk factors in website scraping

| Risk Factor | Low Risk (Generally Permissible) | High Risk (Potential Legal Issues) |
|---|---|---|
| Data Type | Publicly available business data (e.g., SERP results, product prices) | Personal data (names, emails), copyrighted content, data behind a login |
| Access Method | Accessing open, public-facing pages without logging in | Bypassing CAPTCHAs, using stolen credentials, or circumventing IP blocks |
| Website Rules | Respecting robots.txt directives and rate limits | Ignoring robots.txt, aggressively hammering servers, causing downtime |
| Data Usage | Internal analysis, competitive intelligence, SEO monitoring | Republishing copyrighted material, creating a competing commercial product |

With these principles in hand, you can assess your own projects and dig into the specific laws and court cases that define the modern compliance landscape.

How the CFAA defines legal scraping of public data

In the United States, one law dominates the conversation: the Computer Fraud and Abuse Act (CFAA). Passed in 1986 to fight computer hacking, the law uses dated language that became the central battleground for data scraping. The whole debate hangs on two words: “unauthorized access.”

For years, companies insisted that if their Terms of Service said “no scraping,” then any scraping was automatically “unauthorized.” That created a legal minefield. Collecting public information could, in theory, be treated as a federal crime. It was like a public park putting up a “no photography” sign. Is taking a picture against the rules, or actual trespassing?

The landmark case: hiQ Labs v. LinkedIn

The tension finally came to a head in a legal showdown that reshaped the scraping world: hiQ Labs v. LinkedIn. HiQ was a small data analytics firm that scraped public LinkedIn profiles to give employers insights into their workforce. LinkedIn hit them with a cease-and-desist letter, claiming this broke the CFAA.

HiQ fought back. They argued the data was public and that LinkedIn was trying to crush a competitor. The fight went all the way to the Ninth Circuit Court of Appeals, which faced a single big question: is looking at data anyone on the internet can see, without a password, the same as “unauthorized access”?

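In its 2019 decision, the court sided with hiQ. After the Supreme Court sent the case back for reconsideration in light of Van Buren v. United States (2021), the Ninth Circuit reached the same conclusion again in 2022. The logic: if data is publicly accessible and you don’t have to break through a technical barrier to get it, then accessing it isn’t “unauthorized” under the CFAA. The court drew a clear line between scraping and hacking.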

The Ninth Circuit’s ruling effectively stated that the CFAA does not provide a legal basis for website owners to unilaterally forbid the scraping of data that is otherwise accessible to the public.

It was a major shift. The takeaway: the CFAA is meant to stop people from bypassing real technical locks (password prompts, CAPTCHAs), not from violating a website’s Terms of Service to reach public information.

What “unauthorized access” really means today

After hiQ, the meaning of “unauthorized access” became clearer for SEO and data teams. The line is no longer a website’s fine print, but a technical gate.

In practice:

- Public data is fair game. Scraping data visible to any anonymous user (public search results, product prices on an e-commerce site, news articles) generally does not violate the CFAA.

- Authentication is the barrier. The moment you need to log in, enter a password, or use credentials to see data, scraping it puts you in “unauthorized access” territory.

- Bypassing technical blocks is a no-go. Actively defeating security measures like CAPTCHAs, IP blocks, or other bot detection systems can be interpreted as gaining unauthorized access.
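To make the “authentication is the barrier” rule operational, a scraper can flag anonymous responses that look like a login wall or a block and simply stop there. The sketch below is hypothetical: the function name `looks_gated`, the status codes it treats as gates, and the login-path and password-field heuristics are our own assumptions, not a standard, and real sites may signal authentication differently.

```python
import re

# Hypothetical heuristics for "this page needs credentials". 401 means
# authentication is required, 402 is sometimes used for paywalls, and 403 may
# mean either a login wall or a bot block; bypassing either is high risk.
GATE_STATUS_CODES = {401, 402, 403}
# Redirects that land on a login-style path.
LOGIN_PATH_RE = re.compile(r"/(login|signin|sign-in|auth)\b", re.IGNORECASE)
# A password input on the returned page suggests a login form, not content.
PASSWORD_FIELD_RE = re.compile(r"<input[^>]*type=[\"']?password", re.IGNORECASE)


def looks_gated(status_code: int, final_url: str, html: str) -> bool:
    """Return True if an anonymous response appears to sit behind a gate.

    A True result means "skip this URL", not "find a way around it".
    """
    if status_code in GATE_STATUS_CODES:
        return True
    if LOGIN_PATH_RE.search(final_url):
        return True
    return bool(PASSWORD_FIELD_RE.search(html))


# A public product page versus a redirect to a login form.
print(looks_gated(200, "https://example.com/products/42", "<h1>Widget</h1>"))  # False
print(looks_gated(200, "https://example.com/login?next=/account", "<form></form>"))  # True
```

These heuristics will produce false positives and negatives, so treat them as a first filter that errs toward skipping pages, not as a legal determination.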

The distinction matters. Pulling public SERP data for competitive analysis is a world away from scraping a user’s private account behind a login. One is observing what’s in the public square; the other picks the lock on a private building.

The impact has been substantial. Since the hiQ Labs v. LinkedIn ruling established that scraping public data isn’t “hacking,” the precedent has reportedly been cited in over 50 subsequent cases, and by one count 80% of U.S. federal courts now take the view that scraping public data is legal as long as no technical barriers are broken. That legal clarity helped fuel innovation, with web scraping startups reportedly raising $1.2 billion in venture capital between 2020 and 2024 to build new tools for SEO and market intelligence.

The CFAA is an anti-hacking law, not an anti-scraping one. As long as your scrapers focus on truly public information and you aren’t breaking down digital doors to get it, you’re on solid ground under the CFAA.

Drawing the line at personal data and privacy

The CFAA clarifies access to public data, but it’s only one piece of the legal puzzle. The real minefield is personally identifiable information (PII).

Scraping public business data like product prices is one thing. Harvesting data tied to individuals is governed by a maze of unforgiving privacy laws.

Grabbing public prices is like taking notes in a public market. Scraping personal data is like installing a hidden camera in that market to record everyone’s faces. One is research; the other is a privacy breach with severe legal exposure.

For any SEO or data team, understanding this boundary is non-negotiable.

The Clearview AI cautionary tale

No case illustrates the danger better than Clearview AI. The company built a facial recognition empire by scraping billions of photos from public social media profiles. They then sold access to that database to law enforcement and private companies, sparking a global firestorm.

The backlash was swift. Clearview AI didn’t just bend a site’s Terms of Service; they shattered people’s expectation of privacy. That triggered a global crackdown, especially under Europe’s GDPR.

Clearview AI’s downfall cemented a core principle of modern data privacy: just because data is publicly visible doesn’t mean it’s free for any and all use. Consent is king. Without it, collecting personal data is a high-stakes gamble you’re likely to lose.

The global legal backlash

Regulators around the world didn’t hesitate. They hit Clearview AI with massive fines and demanded data deletion. The case became the textbook example of how data protection laws apply to information scraped from the public web.

The numbers are stark. The company’s scraping of over 30 billion facial images from public sites resulted in more than €91 million in fines across 15 jurisdictions by 2025. The case underscores a broader risk: by one estimate, 75% of scraping lawsuits filed since 2020 have involved privacy violations. A 2024 Gartner survey found 68% of enterprises had already shut down personal data scraping projects, shifting to anonymized signals instead.

For a deeper dive into data handling rules, see this Australian Privacy Principles (APPs) guide.

Lessons for SEO and data teams

The Clearview AI saga offers a set of hard-learned lessons worth carving into your compliance strategy.

- Avoid PII. Unless you have explicit consent and a clear legal basis, don’t scrape names, emails, phone numbers, or photos. Even usernames are risky if they can be linked back to a real person.

- “Public” doesn’t equal “permissible.” A photo on a public social media profile doesn’t grant a free pass to scrape, store, and build a commercial product with it.

- Understand global privacy laws. Regulations like GDPR (Europe), CCPA/CPRA (California), and LGPD (Brazil) have long arms. If you scrape data about people in those jurisdictions, their laws can apply to you, regardless of where your office is.

For SEO and AI teams, the message is clear: stick to clean, compliant data sources. Focus on non-personal information like SERP features, product specs, or business listings. The reward from scraping personal data doesn’t justify the legal and financial risk.
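One practical way to stick to non-personal information is to filter records before they are ever stored. The sketch below is a hypothetical, deliberately conservative example: the field names, the `strip_pii` helper, and the regular expressions for emails and phone numbers are our assumptions. Pattern matching will miss plenty of PII (names, photos, usernames), so an allowlist of fields does most of the work and the regexes are only a backstop.

```python
import re

# Hypothetical allowlist of non-personal fields worth keeping.
ALLOWED_FIELDS = {"title", "url", "price", "position", "snippet"}
# Free-text fields that could still smuggle in contact details.
TEXT_FIELDS = {"title", "snippet"}

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
# Loose pattern for phone-like runs: a digit, 6+ digits/separators, a digit.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{6,}\d")


def strip_pii(record: dict) -> dict:
    """Keep allowlisted fields; drop free text that looks like an email or phone number."""
    clean = {}
    for key, value in record.items():
        if key not in ALLOWED_FIELDS:
            continue  # e.g. author, avatar_url, seller_email are never stored
        if key in TEXT_FIELDS and isinstance(value, str) and (
            EMAIL_RE.search(value) or PHONE_RE.search(value)
        ):
            continue  # an allowed text field that contains contact details
        clean[key] = value
    return clean


listing = {
    "title": "Blue Widget",
    "url": "https://shop.example.com/p/123456789",
    "price": 19.99,
    "seller_email": "jane@example.com",
    "author": "Jane Doe",
}
print(strip_pii(listing))
```

The phone pattern is applied only to free-text fields because URLs and IDs often contain long digit runs that would otherwise be dropped by mistake.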

Understanding terms of service and copyright law

When you’re scraping websites, hacking laws like the CFAA aren’t the only concern. Two other legal areas can create serious headaches: a site’s Terms of Service (ToS) and copyright law.

Both typically play out in civil court, where they can lead to lawsuits and financial penalties.

Are terms of service legally binding?

Ignoring a website’s ToS isn’t a federal crime, but it can get you sued for breach of contract. The battleground shifts from criminal to civil court. If a company can prove you agreed to their rules and then broke them, they can pursue damages.

The real question is whether you actually “agreed” to their terms. Courts take a fairly clear stance, and it comes down to how the terms are presented.

- Clickwrap agreements. These are the strongest and most enforceable. You’ve seen them: the checkbox you tick or the “I Agree” button you click to proceed. That action creates a binding contract. Scraping after clicking “I Agree” to a ToS that forbids it is playing with fire.

- Browsewrap agreements. The far more common (and legally weaker) setup. The ToS is just a link, usually in the footer. The argument is that by using the site, you’ve implicitly agreed. Courts are often skeptical.

For a browsewrap agreement to be enforced, the site owner must show that a user had “actual or constructive knowledge” of the terms. If the link is buried and you never even saw it, it’s tough to argue that a contract was ever formed.

That distinction matters for scrapers. Many sites with publicly available data use browsewrap agreements, which makes a breach-of-contract suit harder to win, especially when your scraper hits the site anonymously and never interacts with the ToS link.

Copyright law and scraped data

The next hurdle is copyright. This is where a lot of people get tripped up. Is scraping a website the same as stealing their content? Not always.

Copyright protects creative expression, not raw facts.

Think of a cookbook. The ingredient list for a chocolate cake (2 cups flour, 1 cup sugar) is factual. It isn’t protected by copyright, and anyone is free to use it.

But the written instructions, the story about grandma’s secret technique, the food photography, and the book’s layout are creative expression. Copying that word-for-word is a clear copyright violation.

Note that scraping content carelessly can also lead to other intellectual property violations.

How copyright applies to SEO data

The split between facts and expression is directly relevant to scraping for SEO. Two common scenarios:

- Scraping SERP data. When you scrape a Google results page, you’re mostly collecting facts. Page titles, URLs, and meta descriptions are individual data points. Google’s SERP layout has some creative elements, but you’re extracting the underlying facts for analysis. Low risk.

- Scraping a competitor’s blog. If you scrape every article from a competitor’s blog and republish them on your own site, you’ve crossed a bright red line. That content is protected creative expression. Reproducing it is textbook copyright infringement.

Your purpose matters too. Using factual data for internal analysis, building a competitive intelligence dashboard, or powering an analytics tool is different from republishing copyrighted content publicly.

Rule of thumb: pull the raw facts, not the creative container they’re packaged in.
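In code, “pull the raw facts” usually means extracting a few named fields and discarding the surrounding prose. The sketch below uses Python’s standard-library `html.parser` to keep only a page’s title and meta description (the kind of SERP-style data points described above) while ignoring the body text. The class name `FactExtractor`, the helper `extract_facts`, and the choice of fields are illustrative assumptions.

```python
from html.parser import HTMLParser


class FactExtractor(HTMLParser):
    """Collect the <title> text and the meta description; ignore all other content."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            attr_map = dict(attrs)  # attribute values may be None
            if (attr_map.get("name") or "").lower() == "description":
                self.description = attr_map.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Only text inside <title> is kept; article body text is never stored.
        if self._in_title:
            self.title += data


def extract_facts(html: str, url: str) -> dict:
    parser = FactExtractor()
    parser.feed(html)
    parser.close()
    return {"url": url, "title": parser.title.strip(), "description": parser.description.strip()}


page = (
    "<html><head><title> Blue Widget | Shop </title>"
    '<meta name="description" content="A sturdy blue widget."></head>'
    "<body><p>Our long, creative product story...</p></body></html>"
)
print(extract_facts(page, "https://shop.example.com/blue-widget"))
```

Keeping the extractor narrow by design makes it hard to accidentally store the creative body copy, which is the part copyright protects.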

Practicing ethical scraping to avoid trouble

So far we’ve focused on what’s legally allowed. There’s another layer: ethics. Staying on the right side of the law is the starting line. Ethical scraping means being a good internet neighbor and respecting the technical rules of the road.

It’s also practical. Aggressive scraping is the fastest way to attract legal threats, trigger technical blocks, and tarnish your reputation, even when the data you’re collecting is public.

A few simple principles let you gather data responsibly and build a sustainable pipeline.

Respecting robots.txt directives

Your first stop should be the robots.txt file. It’s a plain text file that website owners place in the root directory to give instructions to crawlers.

Think of it less as a legal wall and more like a “Please Keep Off the Grass” sign. Hopping the fence isn’t a crime, but ignoring the sign signals clear disrespect.

The robots.txt file is your guide to what the website owner considers acceptable for bots to access. While it’s not legally binding, ignoring it is the fastest way to get your IP address blocked and be labeled as a “bad bot.”

A typical robots.txt file might contain directives like these:

- `User-agent: *` applies the following rules to all bots.
- `Disallow: /private/` tells bots not to crawl URLs in the `/private/` directory.
- `Allow: /public/` explicitly permits crawling of the `/public/` directory.

Check and honor these directives. It’s a simple sign of good faith that prevents avoidable conflicts.
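Python’s standard library ships a robots.txt parser, `urllib.robotparser.RobotFileParser`, so checking these directives takes only a few lines. The sketch below parses the example directives described above from a string, plus a `Crawl-delay` line we added for illustration, so it runs offline; a live crawler would call `set_url()` and `read()` against the target site’s /robots.txt instead. The bot name is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# The example directives, plus an illustrative (nonstandard) Crawl-delay line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /public/
Crawl-delay: 5
"""

# Placeholder bot name; use your scraper's real User-Agent token.
USER_AGENT = "MyCoolSEOToolBot"


def build_parser(robots_txt: str) -> RobotFileParser:
    parser = RobotFileParser()
    # parse() accepts the file's lines; a live crawler would instead call
    # parser.set_url("https://example.com/robots.txt") and parser.read().
    parser.parse(robots_txt.splitlines())
    return parser


rules = build_parser(ROBOTS_TXT)
for url in ("https://example.com/public/prices.html",
            "https://example.com/private/report.html"):
    print(url, "allowed" if rules.can_fetch(USER_AGENT, url) else "disallowed")
print("crawl delay:", rules.crawl_delay(USER_AGENT))
```

One design caveat: this parser checks rules in file order and applies the first match, so on overlapping `Allow`/`Disallow` rules it can disagree with crawlers that give precedence to the longest matching path.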

Rate limiting

The second pillar of ethical scraping is rate limiting: making requests at a reasonable, human-like pace. This is the most important part of being a responsible scraper.

One person walks into a store to browse. Normal. A flash mob of 1,000 people storms the entrance at once. That’s a shutdown.

Hammering a server with hundreds or thousands of requests per second is the digital equivalent. It devours bandwidth, slows the site for real users, and can crash the server. That causes real financial damage, and the business will act to stop you.

To avoid that, build delays between requests. Some practices:

- Introduce random delays. Don’t wait a fixed two seconds between requests; vary the timing to mimic real browsing.

- Scrape during off-peak hours. Run scrapers late at night when there are fewer real visitors.

- Identify yourself. Use a clear User-Agent string in your scraper’s headers (e.g., “MyCoolSEOToolBot/1.0”) so site owners can contact you if there’s a problem.
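Two of these practices, random delays and self-identification, fit in a small request throttle; off-peak scheduling is usually handled outside the scraper (for example, by a job scheduler). This is a minimal sketch under our own assumptions: the `PoliteThrottle` class, the 2 to 6 second delay window, and the contact URL in the User-Agent string are hypothetical values to tune per site, raising the minimum if the site’s robots.txt sets a Crawl-delay. The sleep and clock functions are injectable so the pacing logic can be tested without waiting.

```python
import random
import time
from typing import Callable, Dict, Optional

# Hypothetical identification string: bot name, version, and a contact URL.
USER_AGENT = "MyCoolSEOToolBot/1.0 (+https://example.com/bot-info)"


def polite_headers() -> Dict[str, str]:
    """Headers that identify the scraper so site owners can reach you."""
    return {"User-Agent": USER_AGENT}


class PoliteThrottle:
    """Enforce a randomized minimum gap between consecutive requests."""

    def __init__(self, min_delay: float = 2.0, max_delay: float = 6.0,
                 sleep: Callable[[float], None] = time.sleep,
                 clock: Callable[[], float] = time.monotonic,
                 rng: Optional[random.Random] = None) -> None:
        if not 0 <= min_delay <= max_delay:
            raise ValueError("expected 0 <= min_delay <= max_delay")
        self.min_delay = min_delay
        self.max_delay = max_delay
        self._sleep = sleep
        self._clock = clock
        self._rng = rng or random.Random()
        self._last_request: Optional[float] = None

    def wait(self) -> float:
        """Sleep until a random gap has passed since the last request; return seconds slept."""
        slept = 0.0
        if self._last_request is not None:
            # Draw a fresh gap each time so requests don't arrive on a fixed beat.
            target_gap = self._rng.uniform(self.min_delay, self.max_delay)
            elapsed = self._clock() - self._last_request
            slept = max(0.0, target_gap - elapsed)
            if slept:
                self._sleep(slept)
        self._last_request = self._clock()
        return slept
```

In a fetch loop, call `throttle.wait()` immediately before each request and send `polite_headers()` with it; time already spent parsing a page counts toward the gap, so the throttle only sleeps for the remainder.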

Ethical scraping is about building a reputation for responsible data collection. Respecting a site’s rules and technical limits keeps the door open for legal scraping for everyone.

A practical compliance checklist for scraping

Knowing the theory of scraping law is one thing. Practicing it is another. You need a repeatable process to gut-check project risk before a line of code gets written.

This isn’t legal advice. Think of it as a pre-flight checklist for engineering and SEO teams. Asking these questions upfront builds a culture of compliance and creates the documentation to defend your decisions if needed.

Data access and content type

Start with the “what” and the “how.” The type of data and the way you reach it drive most of your legal risk.

- Is the data behind a login or paywall?
  - Question: Do you have to enter a username, password, or any credential to see it?
  - Action: If yes, stop. Accessing data behind an authentication wall without explicit permission is a fast track to an “unauthorized access” claim under the CFAA. Stick to information that’s truly public.

- Does the data contain Personally Identifiable Information (PII)?
  - Question: Are you pulling names, emails, phone numbers, addresses, or user photos?
  - Action: If yes, be exceptionally careful, or skip it. Scraping PII puts you in the crosshairs of privacy laws like GDPR and CCPA. Focus on anonymous business data like product prices or SERP features instead.

- Is the content protected by copyright?
  - Question: Are you extracting raw facts (prices, specs, URLs) or creative works (entire articles, user reviews, photographs)?
  - Action: Target facts, not expression. Copying factual data for internal analysis is low risk. Republishing someone else’s copyrighted blog post or photo gallery is textbook infringement.

Technical and ethical considerations

Next, your technical footprint. How you scrape matters as much as what you scrape. Aggressive scraping is the quickest way to get your IP blocked and attract a cease-and-desist letter.

A responsible scraper acts more like a polite guest than a disruptive intruder. By respecting the website’s rules and technical limits, you minimize conflict and ensure your access isn’t cut off.

- What does the robots.txt file say?
  - Question: Have you checked the target site’s robots.txt for `Disallow` directives on the pages you want to crawl?
  - Action: Follow the rules. A robots.txt file isn’t legally binding, but ignoring it signals bad faith and gets your scraper detected and blocked. It also looks bad if a dispute ever escalates.

- What is your scraping rate?
  - Question: Are you hitting the server at machine-gun speed, or pacing requests like a human?
  - Action: Slow down. Implement rate limiting and add random delays. Hammering a server can slow it down or crash it, opening you up to a lawsuit for financial damages. Our guide to large-scale web scraping covers the best practices in depth.

Web scraping compliance checklist

Before kicking off any new scraping project, run through this checklist with your team. It turns abstract legal concepts into concrete action items and forces a deliberate risk assessment from the start.

| Checklist Item | Assessment Question | Action/Mitigation |
|---|---|---|
| Authentication Gate | Does the data sit behind a login, paywall, or other access control? | If Yes, stop. Do not proceed. This is a clear CFAA risk. |
| Personally Identifiable Information (PII) | Does the data include names, emails, phone numbers, or user photos? | If Yes, avoid scraping or consult a privacy expert. High risk under GDPR/CCPA. |
| Copyrighted Content | Are you scraping creative works (articles, images) or factual data (prices, specs)? | Focus on facts. Republishing creative works is a high copyright risk. |
| Terms of Service (ToS) | Have you reviewed the website’s ToS for explicit bans on scraping? | If Yes, scraping is a breach of contract risk. Assess business need vs. legal risk. |
| robots.txt Directives | Does the robots.txt file Disallow crawling of the target URLs? | Honor all Disallow rules. Ignoring them signals bad faith and risks getting blocked. |
| Scraping Rate | What is the planned request rate? Is it aggressive? | Implement rate limits and randomized delays to mimic human behavior and avoid server strain. |
| Data Usage | What is the end use? Internal analysis, republication, or commercial product? | Internal analysis is lowest risk. Republication is highest risk. |
| Data Value | What is the value of this data? Is it worth the potential legal and technical risk? | Document the business case to justify the project against the assessed risks. |
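Teams that want the checklist enforced rather than just read can encode it as a pre-flight gate in their project tooling. The sketch below is hypothetical: the `ProjectAssessment` fields mirror the table rows, and the split between blocking issues (stop until fixed) and warnings (document and decide) is our own reading of the Action/Mitigation column, not a legal standard.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ProjectAssessment:
    """Answers to the checklist questions for one scraping project."""
    behind_login: bool              # Authentication Gate
    collects_pii: bool              # Personally Identifiable Information
    republishes_creative: bool      # Copyrighted Content / Data Usage
    tos_bans_scraping: bool         # Terms of Service
    targets_disallowed_urls: bool   # robots.txt Directives
    randomized_rate_limit: bool     # Scraping Rate
    business_case_documented: bool  # Data Value


def preflight(a: ProjectAssessment) -> Tuple[List[str], List[str]]:
    """Return (blockers, warnings). Any blocker means the project should not start."""
    blockers: List[str] = []
    warnings: List[str] = []
    if a.behind_login:
        blockers.append("Data sits behind an authentication gate: clear CFAA risk.")
    if a.targets_disallowed_urls:
        blockers.append("Target URLs are disallowed in robots.txt: honor the rules.")
    if not a.randomized_rate_limit:
        blockers.append("No rate limiting or randomized delays planned.")
    if a.collects_pii:
        warnings.append("PII in scope: avoid it or consult a privacy expert (GDPR/CCPA).")
    if a.republishes_creative:
        warnings.append("Republishing creative works is a high copyright risk.")
    if a.tos_bans_scraping:
        warnings.append("ToS bans scraping: breach-of-contract risk, weigh it against business need.")
    if not a.business_case_documented:
        warnings.append("Document the business case before proceeding.")
    return blockers, warnings
```

Storing the returned lists alongside the project record gives you the paper trail the checklist is meant to create.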

Making this checklist a mandatory first step ensures every project starts with a clear view of the hurdles. It’s a simple way to scrape more responsibly and protect your business.

Frequently asked questions about scraping legality

The legal side of scraping can feel like a minefield. Quick answers to the most common questions from SEOs, engineers, and data teams:

Is scraping a competitor’s prices illegal?

Generally, no. Scraping publicly available prices is a common, low-risk part of competitive intelligence. Prices are facts, not creative works protected by copyright.

As long as prices are visible to any visitor without a login and you aren’t hammering their servers, you’re on solid ground. The risk creeps in if, before you can see the prices, you have to click “I Agree” on a ToS that bans scraping.

What if a website’s robots.txt says “Disallow”?

The robots.txt file is not a legally binding contract. Ignoring it, on its own, is unlikely to get you hauled into court for hacking under the CFAA.

It’s like a “No Trespassing” sign on an open field next to a public park. You probably won’t be arrested for walking across, but you’re knowingly ignoring the owner’s wishes. That makes you a “bad bot” and is the fastest way to get your IP blocked. Respect robots.txt as a matter of professional conduct.

Can I get sued for breaching terms of service?

Yes, you can be sued for breach of contract. Whether the website owner can win is another question, and it usually comes down to how you “agreed” to their terms.

- Clickwrap agreement. If you checked a box or clicked “I Agree” to the terms before accessing the data, their case is strong. You actively consented.

- Browsewrap agreement. If the ToS was just a link in the footer that you never saw or clicked, their case is much weaker. Courts are skeptical that you can be bound by a contract you never knew existed.

The decision tree below maps the risk factors for any scraping project at a glance.

The real trouble in any scraping legal analysis comes from accessing private data or breaking clear technical rules, not from gathering public information.

Navigating the complexities of data collection for AI and SEO requires a reliable partner. cloro provides a high-scale scraping API that delivers structured, compliant data from top search and AI assistants, eliminating legal guesswork and technical overhead. Get the clean, consistent data you need to power your workflows without the risk. Start with 500 free credits at cloro.dev.

Related reading

Best web scraping tools for 2026

From Python libraries to AI-powered APIs. A comprehensive guide to the best web scraping tools for developers and marketers in 2026.

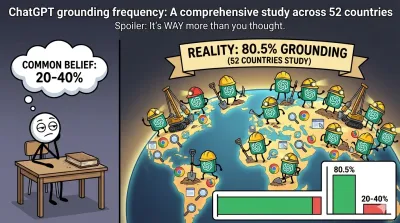

ChatGPT uses web search in 80%+ of the prompts

Independent testing reveals ChatGPT uses web search and grounding in 81% of responses—much more frequently than the commonly believed 20-40%. We tested 5,200 queries across 52 countries to measure organic behavior.

Best ChatGPT scraper tools for 2026: extract the unextractable

The official API doesn't show you what users see. Here are the best tools to scrape the ChatGPT web interface, parse citations, and track brand mentions.